Introducing R and RStudio IDE

Overview

Teaching: 30 min

Exercises: 15 min

Questions

Why use R?

Why use RStudio and how does it differ from R?

Objectives

Know advantages of analyzing data in R

Know advantages of using RStudio

Create an RStudio project, and know the benefits of working within a project

Be able to customize the RStudio layout

Be able to locate and change the current working directory with getwd() and setwd()

Compose an R script file containing comments and commands

Understand what an R function is

Locate help for an R function using ?, ??, and args()

Getting ready to use R for the first time

In this lesson we will take you through the very first things you need to get R working.

A Brief History of R

R has been around since 1995, and was created by Ross Ihaka and Robert Gentleman at the University of Auckland, New Zealand. R is based off the S programming language developed at Bell Labs and was developed to teach intro statistics. See this slide deck by Ross Ihaka for more info on the subject.

Advantages of using R

At more than 20 years old, R is fairly mature and growing in popularity. However, programming isn’t a popularity contest. Here are key advantages of analyzing data in R:

- R is open source. This means R is free - an advantage if you are at an institution where you have to pay for your own MATLAB or SAS license. Open source is important to your colleagues in parts of the world where expensive software is inaccessible. Being open source also means that R is actively developed by a community (see r-project.org), and there are regular updates.

- R is widely used. Ok, maybe programming is a popularity contest. Because R is used in many areas (not just bioinformatics), you are more likely to find help online when you need it. Chances are, almost any error message you run into, someone else has already experienced.

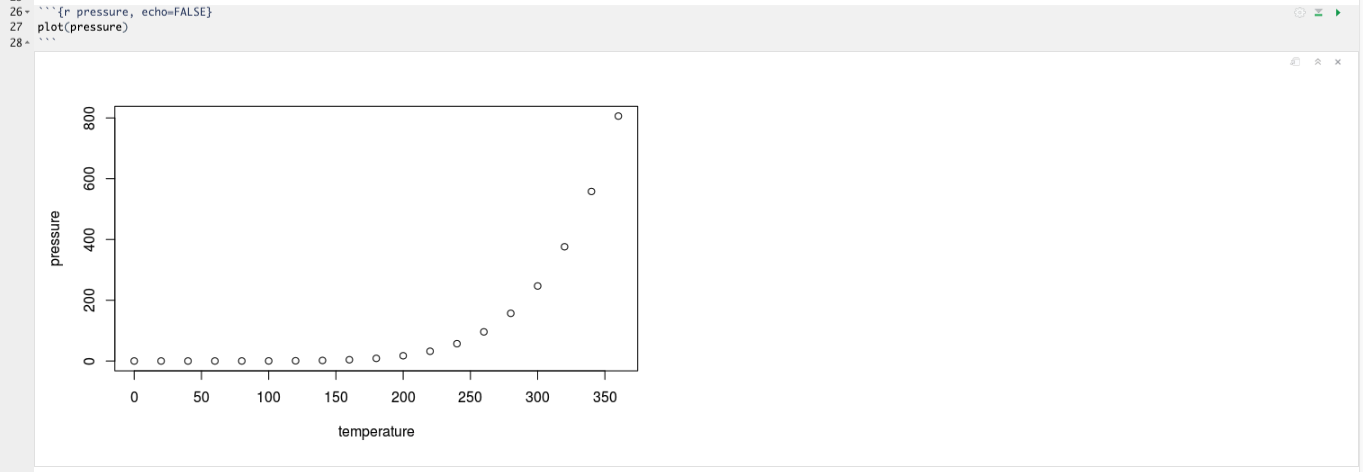

- R is powerful. R runs on multiple platforms (Windows/MacOS/Linux). It can work with much larger datasets than popular spreadsheet programs like Microsoft Excel, and because of its scripting capabilities, analyses done in R are far more reproducible. Also, there are thousands of available software packages for science, including genomics and other areas of life science.

Discussion: Your experience

What has motivated you to learn R? Have you had a research question for which spreadsheet programs such as Excel have proven difficult to use, or where the size of the data set created issues?

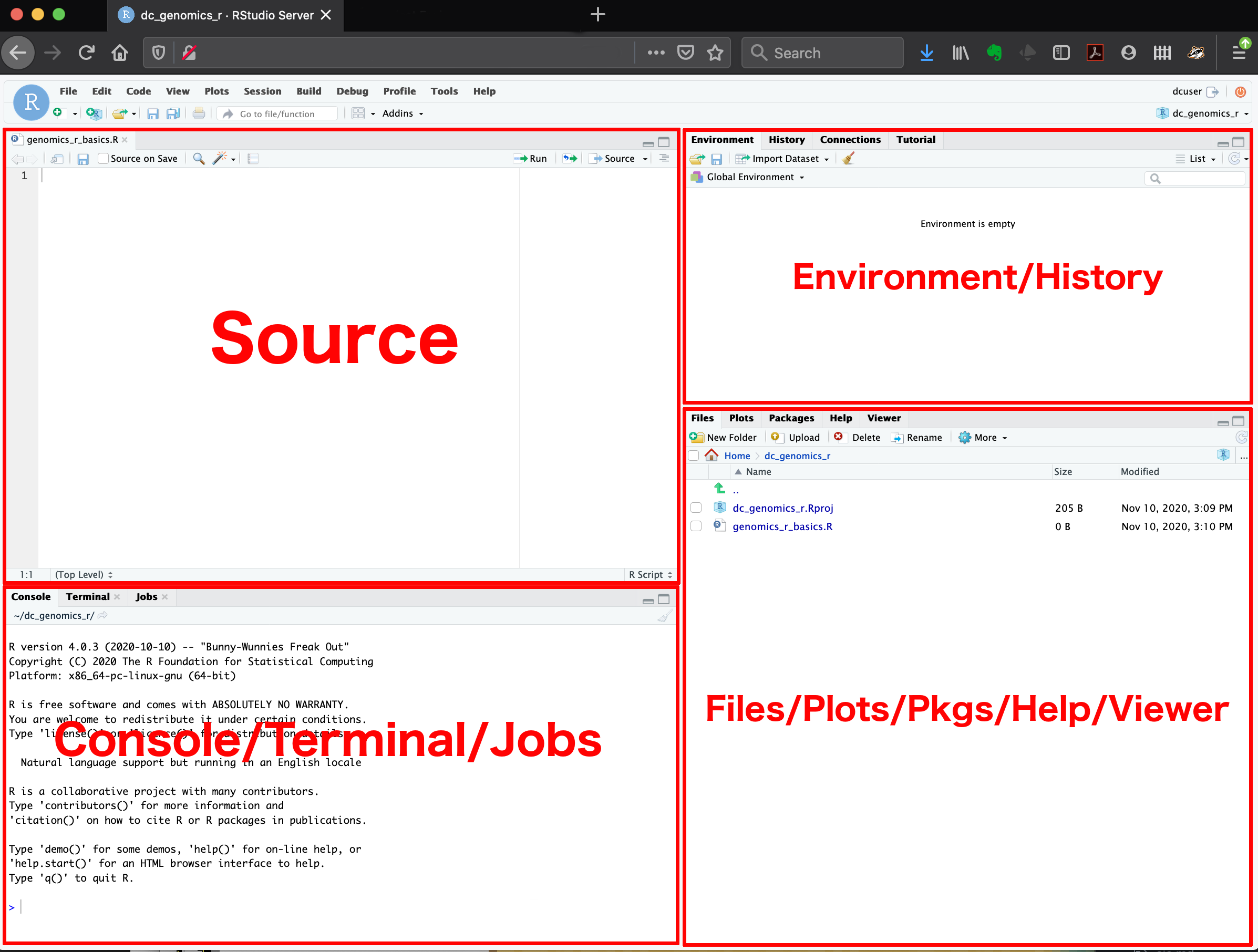

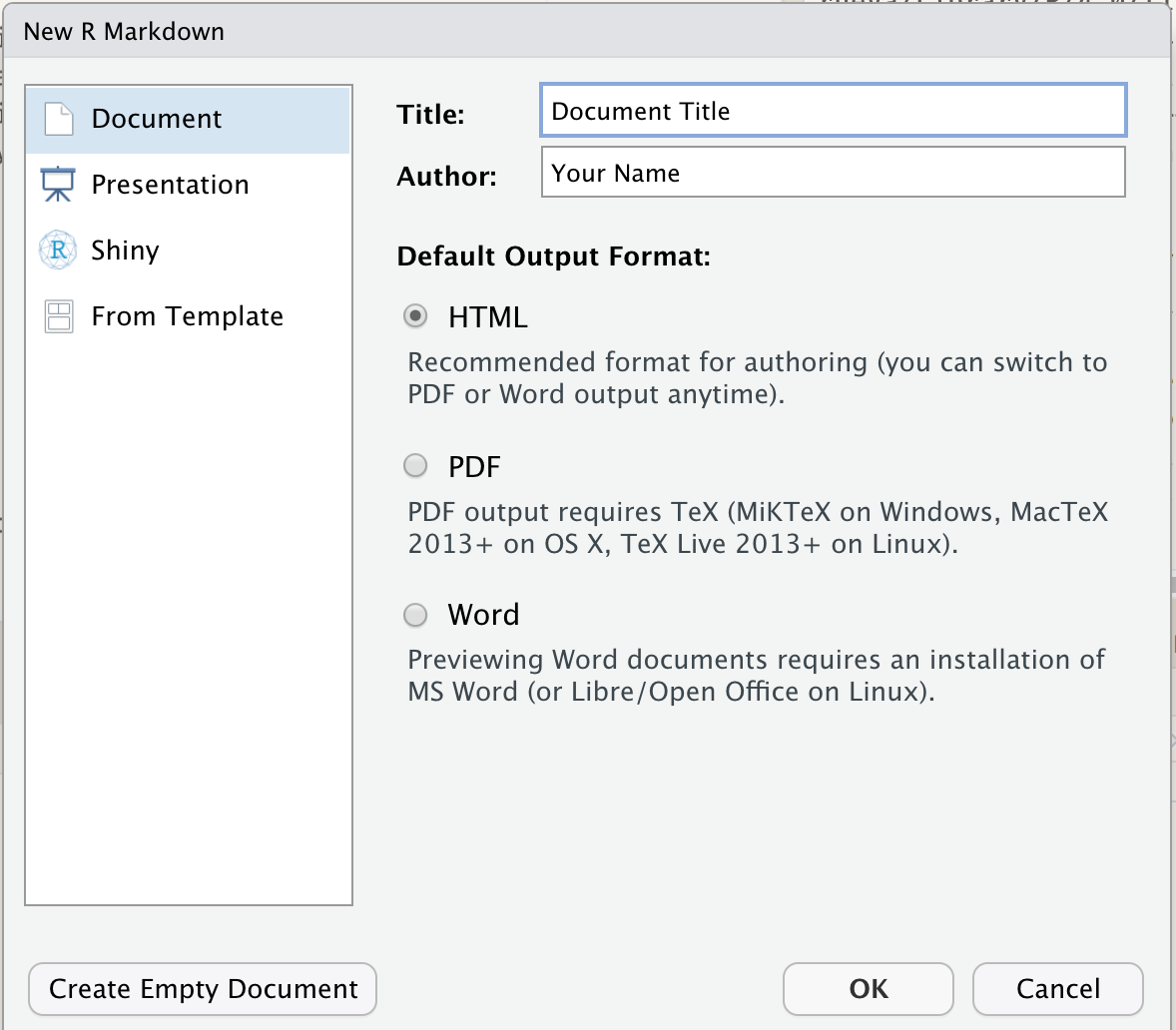

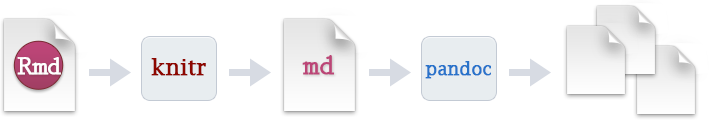

Introducing RStudio

In these lessons, we will be making use of a software called RStudio, an Integrated Development Environment (IDE). RStudio, like most IDEs, provides a graphical interface to R, making it more user-friendly, and providing dozens of useful features. We will introduce additional benefits of using RStudio as you cover the lessons.

What about this R app? Should I use that?

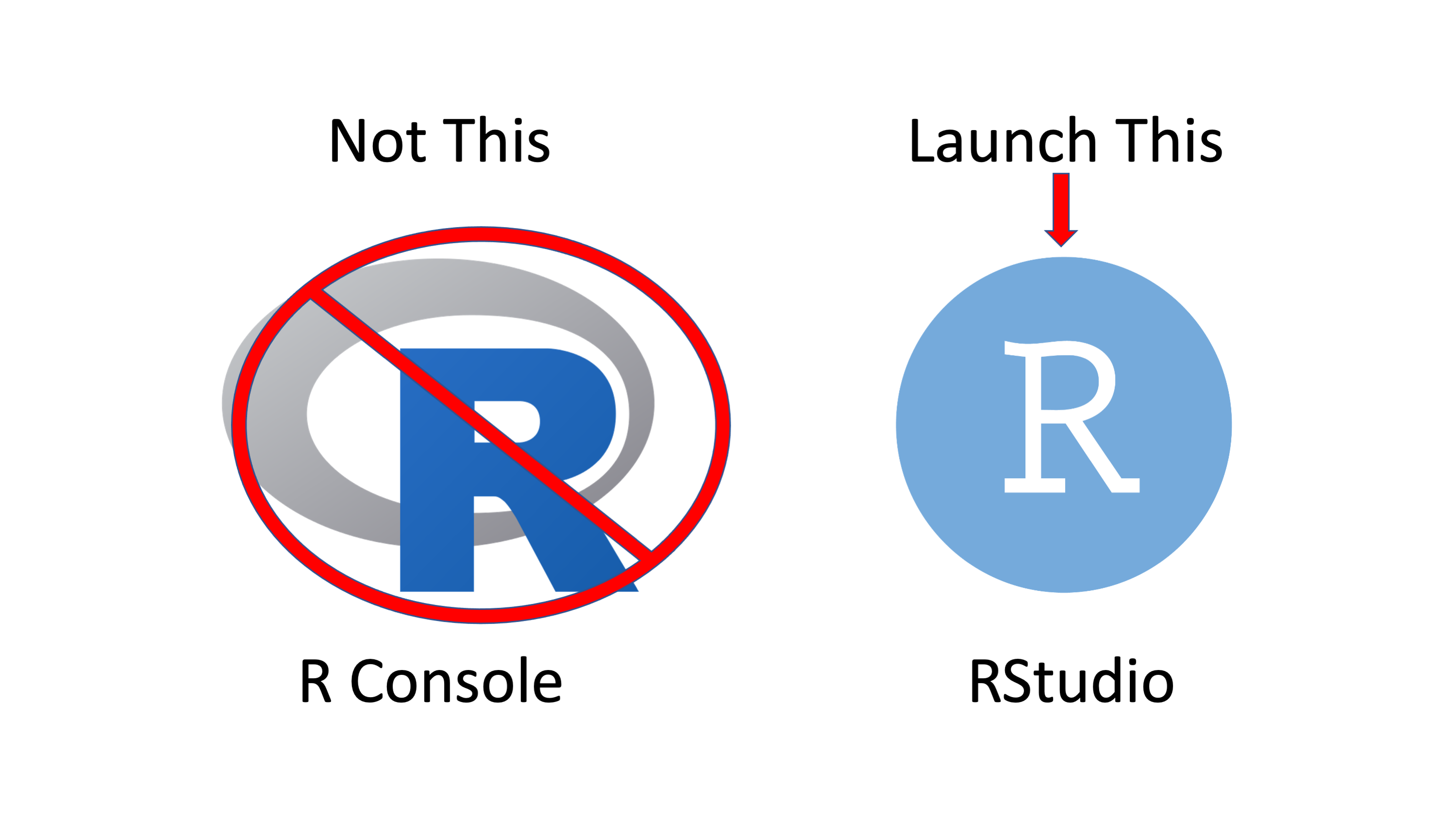

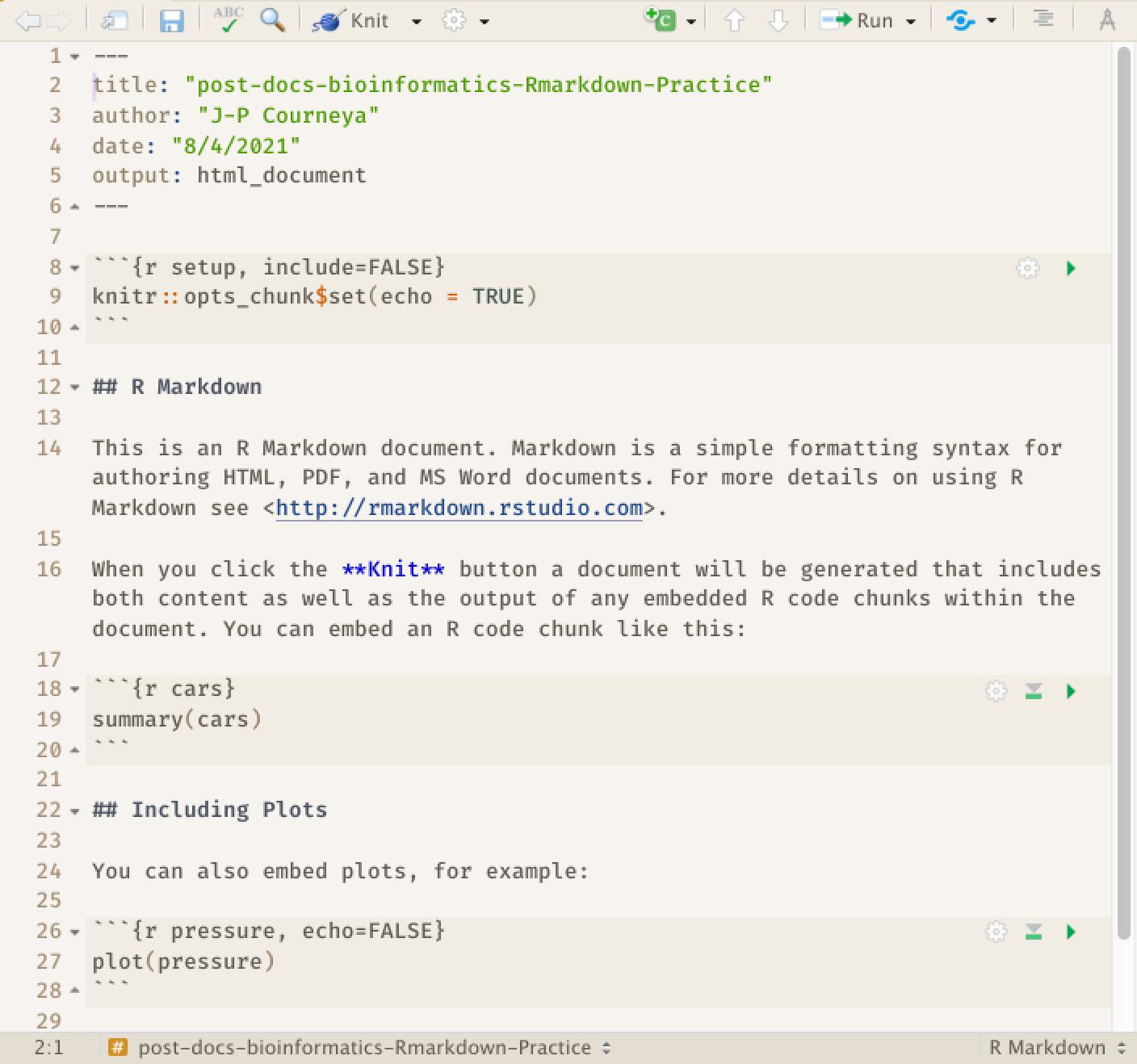

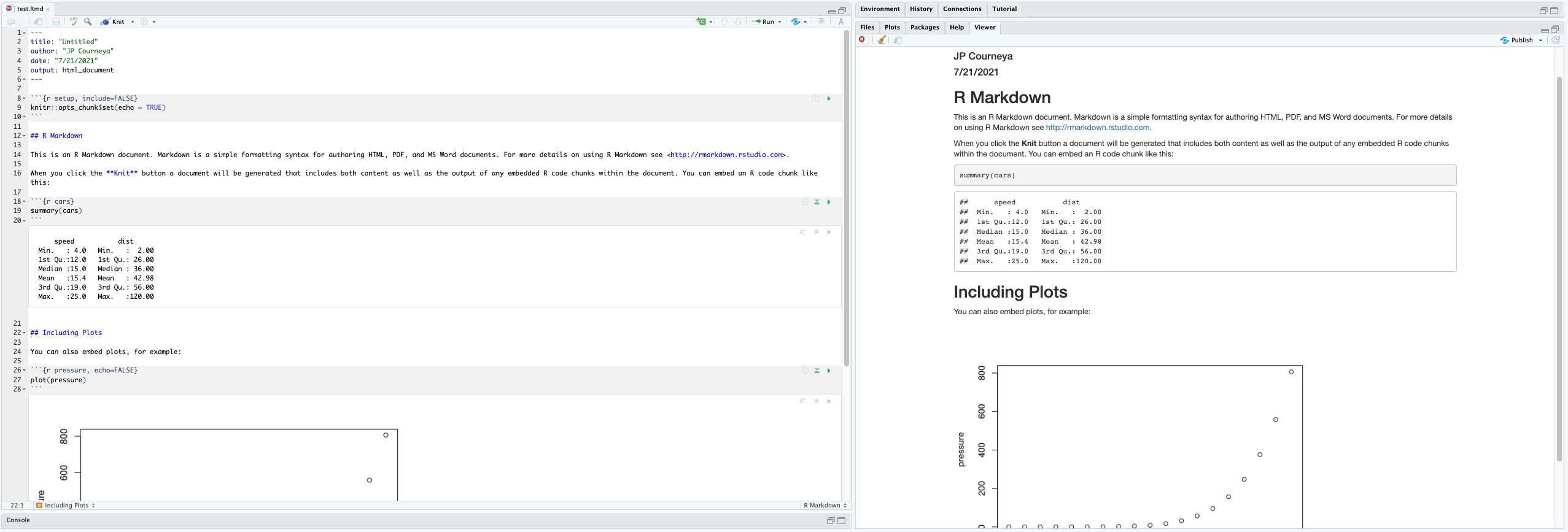

Depending on what operating system you’re using, you will notice in your programs or application menu that there is RStudio and another piece of software that is also called R. Currently the application icons look like this:

In case you end up launching R and not RStudio, you will see an application window that looks like this:

Go ahead and close it. While there is nothing inherently wrong with using the R application, the features and benefits RStudio provides for programming in R significantly outweigh those of the plain R console. It’s recommended that you use RStudio.

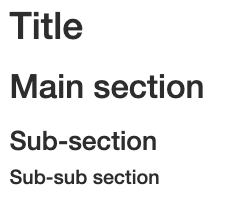

Overview and customization of the RStudio layout

Here are the major windows (or panes) of the RStudio environment:

- Source: This pane is where you will write/view R scripts. Some outputs (such as if you view a dataset using View()) will appear as a tab here.

- Console/Terminal/Jobs: This is actually where you see the execution of commands. This is the same display you would see if you were using R at the command line without RStudio. You can work interactively (i.e. enter R commands here), but for the most part we will run a script (or lines in a script) in the source pane and watch their execution and output here. The “Terminal” tab gives you access to a command-line terminal such as Bash (a shell for your operating system, unrelated to R). RStudio also allows you to run jobs (analyses) in the background. This is useful if some analysis will take a while to run. You can see the status of those jobs in the “Jobs” tab.

- Environment/History: Here, RStudio will show you what datasets and objects (variables) you have created and which are defined in memory. You can also see some properties of objects/datasets such as their type and dimensions. The “History” tab contains a history of the R commands you’ve executed.

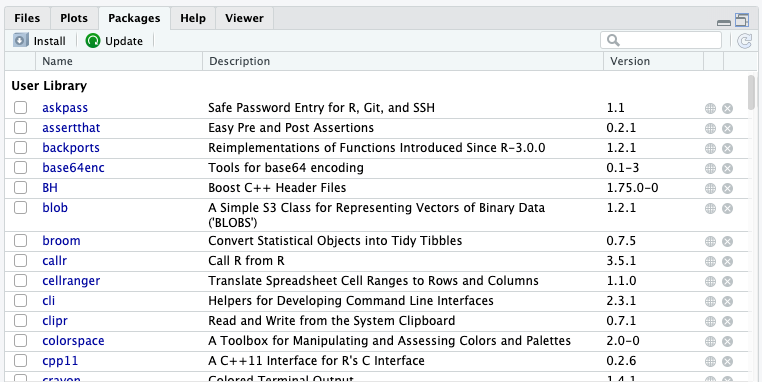

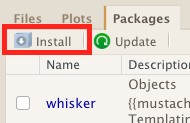

- Files/Plots/Packages/Help/Viewer: This multipurpose pane will show you the contents of directories on your computer. You can also use the “Files” tab to navigate and set the working directory. The “Plots” tab will show the output of any plots generated. In “Packages” you will see what packages are actively loaded, or you can attach installed packages. “Help” will display help files for R functions and packages. “Viewer” will allow you to view local web content (e.g. HTML outputs).

All of the panes in RStudio have configuration options. For example, you can minimize/maximize a pane, or by moving your mouse in the space between panes you can resize as needed. The most important customization options for pane layout are in the View menu. Other options such as font sizes, colors/themes, and more are in the Tools menu under Global Options.

You are working with R

Although we won’t be working with R at the terminal, there are lots of reasons to. For example, once you have written an R script, you can run it at any Linux or Windows terminal without the need to start up RStudio. We don’t want you to get confused - RStudio runs R, but R is not RStudio. For more on running an R script at the terminal see this Software Carpentry lesson.

Create an RStudio project

One of the first benefits we will take advantage of in RStudio is something called an RStudio Project. An RStudio project allows you to more easily:

- Save data, files, variables, packages, etc. related to a specific analysis project

- Restart work where you left off

- Collaborate, especially if you are using version control such as git.

1. To create a project, go to the File menu, and click New Project....

2. In the window that opens select New Directory, then New Project. For “Directory name:” enter post-docs_genomics_r. For “Create project as subdirectory of”, click Browse... and then click Choose which will select your Documents or Desktop directory.

3. Finally click Create Project. In the “Files” tab of your output pane (more about the RStudio layout in a moment), you should see an RStudio project file, post-docs_genomics_r.Rproj. All RStudio projects end with the “.Rproj” file extension.

Now when we start R in this project directory, or open this project with RStudio, all of our work on this project will be entirely self-contained in this directory.

Best practices for project organization

Although there is no “best” way to lay out a project, there are some general principles to adhere to that will make project management easier:

Treat data as read only

This is probably the most important goal of setting up a project. Data is typically time consuming and/or expensive to collect. Working with it interactively (e.g., in Excel) where it can be modified means you are never sure of where the data came from, or how it has been modified since collection. It is therefore a good idea to treat your data as “read-only”.

Data Cleaning

In many cases your data will be “dirty”: it will need significant preprocessing to get into a format R (or any other programming language) will find useful. This task is sometimes called “data munging”. I find it useful to store these scripts in a separate folder, and create a second “read-only” data folder to hold the “cleaned” data sets.

Treat generated output as disposable

Anything generated by your scripts should be treated as disposable: it should all be able to be regenerated from your scripts.

There are lots of different ways to manage this output. I find it useful to have an output folder with different sub-directories for each separate analysis. This makes it easier later, as many of my analyses are exploratory and don’t end up being used in the final project, and some of the analyses get shared between projects.

Tip: Good Enough Practices for Scientific Computing

Good Enough Practices for Scientific Computing gives the following recommendations for project organization:

- Put each project in its own directory, which is named after the project.

- Put text documents associated with the project in the doc directory.

- Put raw data and metadata in the data directory, and files generated during cleanup and analysis in a results directory.

- Put source for the project’s scripts and programs in the src directory, and programs brought in from elsewhere or compiled locally in the bin directory.

- Name all files to reflect their content or function.

Now that you have seen some practices for keeping your R data projects organized, let’s put them into action.

Exercise: Create sub-directories for your project.

A) Create a doc directory to store text documents.

B) Create a data directory for raw data and metadata.

C) Create a results directory for files generated during cleanup and analysis.

D) Create a src directory for your scripts.

Solution

A) Using the RStudio Files tab in pane 4 click the New Folder button. We will call the folder: doc

B) Using the RStudio Files tab in pane 4 click the New Folder button. We will call the folder: data

C) Using the RStudio Files tab in pane 4 click the New Folder button. We will call the folder: results

D) Using the RStudio Files tab in pane 4 click the New Folder button. We will call the folder: src
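The same folders can also be created from R itself rather than through the Files tab. The following is a minimal sketch, assuming you want the four sub-directories named in the exercise. The name project_root is a hypothetical stand-in (a temporary directory here, so the example is safe to run anywhere) for your own project folder.

```r
# Hypothetical project location: a temporary directory is used so that
# running this sketch does not touch your real project folder.
project_root <- file.path(tempdir(), "post-docs_genomics_r")

# The four sub-directories named in the exercise
sub_dirs <- c("doc", "data", "results", "src")

for (sub_dir in sub_dirs) {
  # recursive = TRUE also creates project_root if it does not exist yet
  dir.create(file.path(project_root, sub_dir),
             recursive = TRUE, showWarnings = FALSE)
}

# List what was created
list.files(project_root)
```

Inside a real RStudio project you could set project_root to "." so that the folders are created in the project directory.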

Save some data in the data directory

Now that we have a good directory structure, we will save our data files in the data/ directory.

Challenge 1

Download the combined_tidy_vcf data from here.

- Download the file

- Make sure it’s saved under the name combined_tidy_vcf.csv

- Save the file in the data/ folder within your project.

We will load and inspect these data later.

Creating your first R script

Now that we are ready to start exploring R, we will want to keep a record of the commands we are using. To do this we can create an R script:

Click the File menu and select New File and then R Script. Before we go any further, save your script by clicking the save/disk icon that is in the bar above the first line in the script editor, or click the File menu and select save. In the “Save File” window that opens, name your file “genomics_r_basics”. The new script genomics_r_basics.R should appear under “files” in the output pane. By convention, R scripts end with the file extension .R.

Getting to work with R: navigating directories

Now that we have covered the more aesthetic aspects of RStudio, we can get to work using some commands. We will write, execute, and save the commands we learn in our genomics_r_basics.R script that is loaded in the Source pane. First, let’s see what directory we are in. To do so, type the following command into the script:

getwd()

To execute this command, make sure your cursor is on the same line the command is written. Then click the Run button that is just above the first line of your script in the header of the Source pane.

In the console, we expect to see the following output:

[1] "~/Documents/post-docs_genomics_r"

* Notice, at the Console, you will also see the instruction you executed above the output in blue.

Since we will be learning several commands, we may already want to keep some short notes in our script to explain the purpose of each command. Entering a # at the start of a line, or using the keyboard shortcut Ctrl + Shift + C while your cursor is on that line, turns the line into a comment, which R will not try to interpret as code. Edit your script to include a comment on the purpose of commands you are learning, e.g.:

# this command shows the current working directory

getwd()

Exercise: Work interactively in R

What happens when you try to enter the getwd() command in the Console pane?

Solution

You will get the same output as when you ran getwd() from the source. You can run any command in the Console, however, executing it from the source script will make it easier for us to record what we have done, and ultimately run an entire script, instead of entering commands one-by-one.

Next, let’s create a folder in Documents or Desktop using the New Folder button in the RStudio Files tab in pane 4. We will call the folder: R_data.

For the purposes of this exercise we want you to be in the directory "~/Documents/R_data". What if you weren’t? You can set your working directory using the setwd() command. Enter this command in your script, but don’t run this yet.

# This sets the working directory

setwd()

You may have guessed, you need to tell the setwd() command what directory you want to set as your working directory. To do so, inside of the parentheses, open a set of quotes. Inside the quotes enter a ~/ which is the home directory for Linux, Mac and Windows. Next, use the Tab key, to take advantage of RStudio’s Tab-autocompletion method, to select Documents or Desktop depending on what you designated, and the new folder R_data you just created. The path in your script should look like this:

# This sets the working directory

setwd("~/Documents/R_data")

When you run this command, the console repeats the command, but gives you no output. Instead, you see the blank R prompt: >. Congratulations! Although it seems small, knowing what your working directory is and being able to set your working directory is the first step to analyzing your data.
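One detail that the empty prompt hides is that setwd() invisibly returns the directory you were in before the change, which makes it easy to go back. The sketch below illustrates this. tempdir() is used as a hypothetical stand-in for "~/Documents/R_data" so the example runs on any computer, and the object names are ours.

```r
# tempdir() is a hypothetical stand-in for "~/Documents/R_data"
target_dir <- tempdir()

# setwd() invisibly returns the directory you were in before the change
previous_dir <- setwd(target_dir)

# Confirm that the working directory has changed
getwd()

# Go back to where you started and confirm again
setwd(previous_dir)
getwd()
```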

Tip: Never use setwd()

Wait, what was the last 2 minutes about? Well, setting your working directory is something you need to do, but you need to be very careful about using this as a step in your script. For example, what if your script is being run on a computer that has a different directory structure? The top-level path in a Unix file system is root /, but on Windows it is likely C:\. This is one of several ways you might cause a script to break because a file path is configured differently than your script anticipates. The here package and the base R function file.path() allow you to specify file paths in a way that is more operating system independent. See Jenny Bryan’s blog post for this and other R tips.
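As a small illustration of the file.path() approach, the sketch below builds a path to the file from Challenge 1 relative to the project directory. It assumes the data/ folder and file name used earlier in this lesson; the object name vcf_path is ours.

```r
# Build a relative path from its parts instead of typing separators by hand
vcf_path <- file.path("data", "combined_tidy_vcf.csv")
vcf_path
# [1] "data/combined_tidy_vcf.csv"

# A relative path like this one is interpreted from the working directory,
# so it keeps working when the whole project folder is moved or shared.
# file.exists() reports whether the file is actually there.
file.exists(vcf_path)
```

Because the path never mentions a home directory or drive letter, the same line works for a collaborator whose project folder lives somewhere else.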

Exercise

Use setwd() to set the working directory back to the project directory you created in the “Create an RStudio project” section.

Solution

In your script write setwd("~/Documents/post-docs_genomics_r") or setwd("~/Desktop/post-docs_genomics_r") and hit Control + Enter. You can also write that in the console. Notice that here we provide the full path to the directory/folder we are going to set the working directory to.

Alternatively you can use the Files tab in pane 4 to navigate to your project folder. Once there, click the More/Gear icon, then select Set as Working Directory.

Using functions in R, without needing to master them

A function in R (or any computing language) is a short program that takes some input and returns some output. Functions may seem like an advanced topic (and they are), but you have already used at least one function in R. getwd() is a function! The next sections will help you understand what is happening in any R script.

Exercise: What do these functions do?

Try the following functions by writing them in your script. See if you can guess what they do, and make sure to add comments to your script about your assumed purpose.

dir()

sessionInfo()

date()

Sys.time()

Solution

dir() # Lists files in the working directory

sessionInfo() # Gives the version of R and additional info including on attached packages

date() # Gives the current date and time as a character string

Sys.time() # Gives the current date and time as a date-time object

Notice: Commands are case sensitive!

You have hopefully noticed a pattern - an R function has three key properties:

- Functions have a name (e.g. dir, getwd); note that functions are case sensitive!

- Following the name, functions have a pair of ()

- Inside the parentheses, a function may take 0 or more arguments

An argument may be a specific input for your function and/or may modify the function’s behavior. For example the function round() will round a number with a decimal:

# This will round a number to the nearest integer

round(3.14)

[1] 3

Getting help with function arguments

What if you wanted to round to a specific number of decimal places? round() can do this, but you may first need to read the help to find out how. To see the help (in R sometimes also called a “vignette”) enter a ? in front of the function name:

?round()

The Help tab in pane 4 will show you information (often, too much information). You will slowly learn how to read and make sense of help files. Checking the “Usage” or “Examples” headings is often a good place to look first. If you look under “Arguments,” we also see what arguments we can pass to this function to modify its behavior.

You can also see a function’s arguments using the args() function:

args(round)

function (x, digits = 0)

NULL

round() takes two arguments, x, which is the number to be rounded, and a digits argument. The = sign indicates that a default (in this case 0) is already set. Since x is not set, round() requires we provide it, in contrast to digits where R will use the default value 0 unless you explicitly provide a different value. We can explicitly set the digits parameter when we call the function:

round(3.14159, digits = 2)

[1] 3.14

R also accepts what we call “positional arguments”: if you pass a function arguments separated by commas, R assumes that they are in the order you saw when we used args(). In the case below that means that x is 3.14159 and digits is 2.

round(3.14159, 2)

[1] 3.14
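To make the difference between named and positional arguments concrete, here is a short sketch using only base R. The last call uses signif(), a related base R function not covered in this lesson, which rounds to a number of significant digits rather than decimal places.

```r
# Named arguments can be given in any order
round(digits = 2, x = 3.14159)
# [1] 3.14

# Positional arguments must follow the order shown by args()
round(3.14159, 2)
# [1] 3.14

# signif() counts significant digits instead of decimal places
signif(3.14159, digits = 1)
# [1] 3
```

Naming arguments takes a little more typing, but it makes a call easier to read and protects you from passing values in the wrong order.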

Finally, what if you are using ? to get help for a function in a package that is not loaded in your current R session, such as when you are running a script which has dependencies? In that case,

?geom_point()

will return an error:

Error in .helpForCall(topicExpr, parent.frame()) :

no methods for ‘geom_point’ and no documentation for it as a function

Use two question marks (i.e. ??geom_point()) and R will return results from a search of the documentation for packages you have installed on your computer in the “Help” tab. Finally, if you think there should be a function, for example a statistical test, but you aren’t sure what it is called in R, or what functions may be available, use the help.search() function.

Exercise: Searching for R functions

Use help.search() to find R functions for the following statistical functions. Remember to put your search query in quotes inside the function’s parentheses.

- Chi-Squared test

- Student-t test

- mixed linear model

Solution

While your search results may return several tests, we list a few you might find:

- Chi-Squared test: stats::Chisquare

- Student-t test: stats::TDist

- mixed linear model: stats::lm.glm

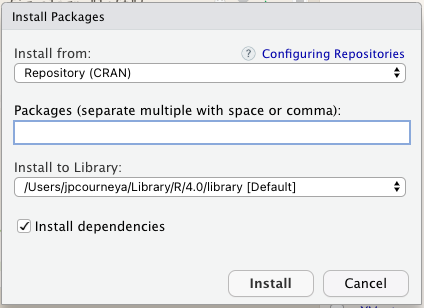

We will discuss more on where to look for the libraries and packages that contain functions you want to use. For now, be aware that two important ones are CRAN - the main repository for R, and Bioconductor - a popular repository for bioinformatics-related R packages which we will learn more about later.

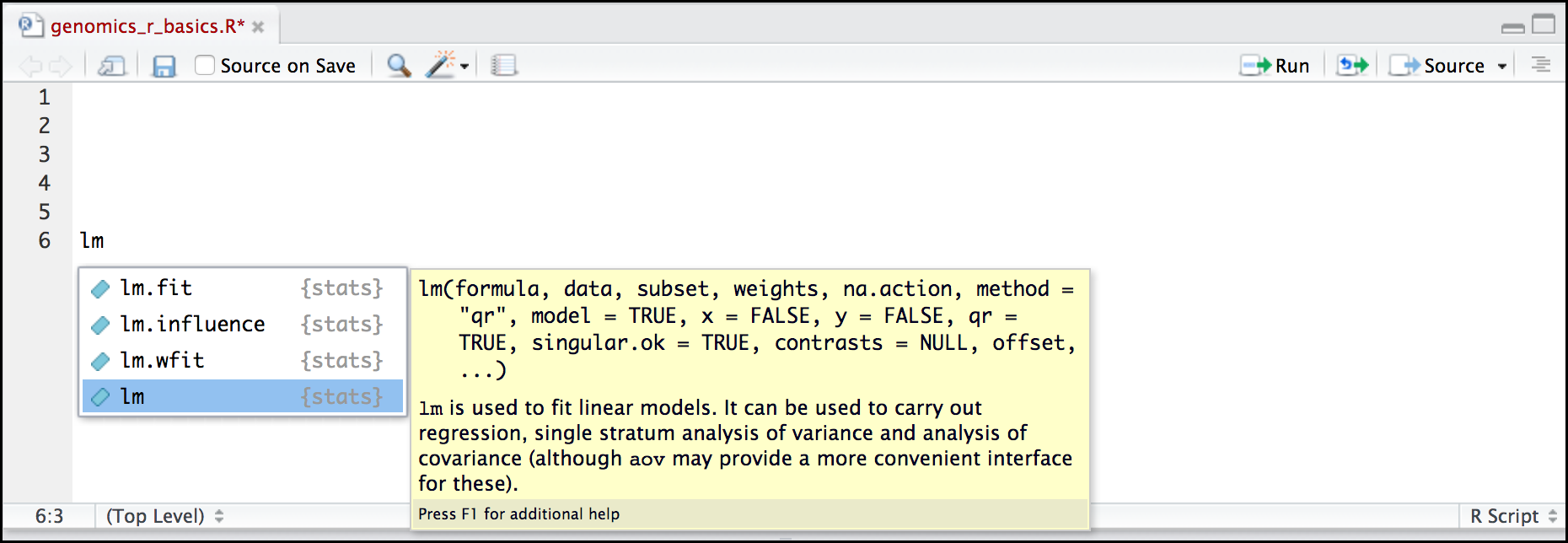

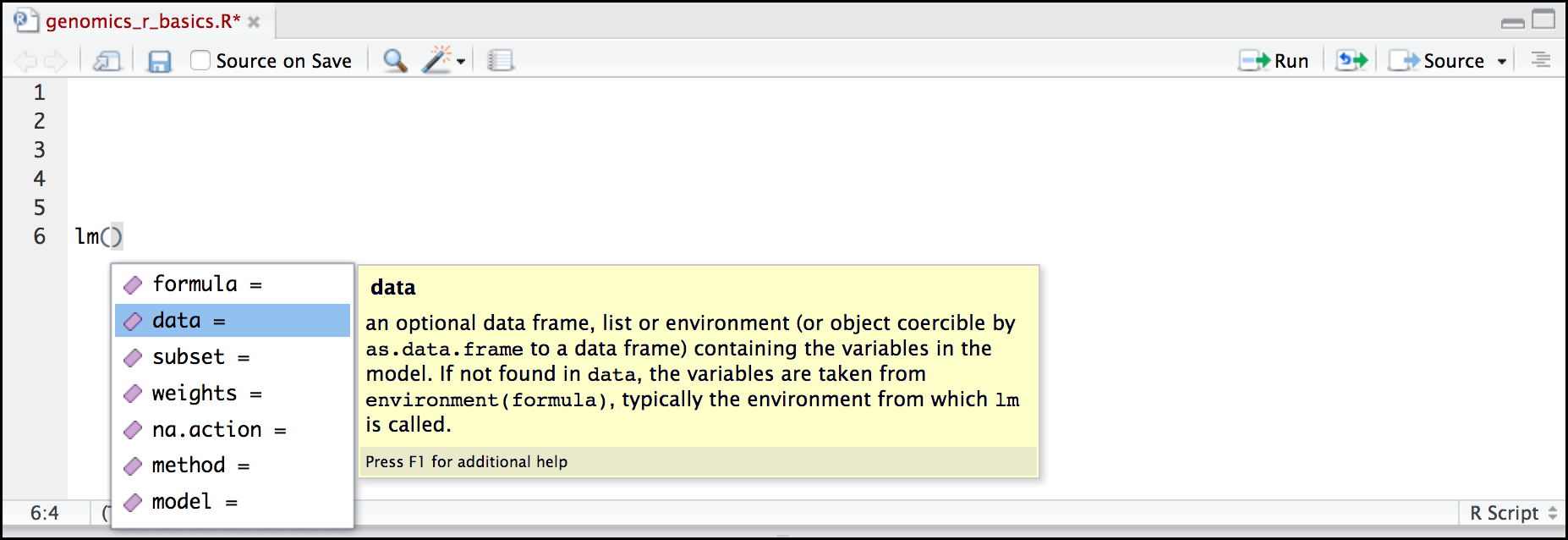

RStudio contextual help

Here is one last bonus we will mention about RStudio. It’s difficult to remember all of the arguments and definitions associated with a given function. When you start typing the name of a function and hit the Tab key, RStudio will display functions and associated help:

Once you type a function, hitting the Tab inside the parentheses will show you the function’s arguments and provide additional help for each of these arguments.

When you have no idea where to begin

If you don’t know what function or package you need to use, CRAN Task Views is a specially maintained list of packages grouped into fields. This can be a good starting point.

When your code doesn’t work: seeking help from your peers

If you’re having trouble using a function, 9 times out of 10, the answers you are seeking have already been answered on Stack Overflow. You can search using the [r] tag. You can also Google it which typically takes you to a Stack Overflow post.

If you can’t find the answer, there are a few useful functions to help you ask a question from your peers:

?dput

Will dump the data you’re working with into a format so that it can be copy and pasted by anyone else into their R session.
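As a quick illustration of dput(), the sketch below uses a small made-up vector (example_values is a hypothetical object, not part of the lesson data) and shows that the printed text is itself R code that rebuilds the object.

```r
# A small, hypothetical object standing in for the data you want to share
example_values <- c(a = 1, b = 2)

# dput() prints R code that recreates the object
dput(example_values)
# c(a = 1, b = 2)

# Anyone can paste that printed code into their own session to rebuild it
rebuilt_values <- c(a = 1, b = 2)
identical(example_values, rebuilt_values)
# [1] TRUE
```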

sessionInfo()

R version 4.0.4 (2021-02-15)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Big Sur 10.16

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRblas.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] knitr_1.33

loaded via a namespace (and not attached):

[1] compiler_4.0.4 magrittr_2.0.1 tools_4.0.4 stringi_1.7.3 stringr_1.4.0

[6] xfun_0.24 evaluate_0.14

Will print out your current version of R, as well as any packages you have loaded. This can be useful for others to help reproduce and debug your issue.

Other ports of call

Key Points

R is a powerful, popular open-source scripting language

You can customize the layout of RStudio, and use the project feature to manage the files and packages used in your analysis

RStudio allows you to run R in an easy-to-use interface and makes it easy to find help

R Basics

Overview

Teaching: 60 min

Exercises: 20 min

Questions

What will these lessons not cover?

What are the basic features of the R language?

What are the most common objects in R?

Objectives

Be able to create the most common R objects including vectors

Understand that vectors have modes, which correspond to the type of data they contain

Be able to use arithmetic operators on R objects

Be able to retrieve (subset), name, or replace, values from a vector

Be able to use logical operators in a subsetting operation

Understand that lists can hold data of more than one mode and can be indexed

“The fantastic world of R awaits you” OR “Nobody wants to learn how to use R”

Before we begin this lesson, we want you to be clear on the goal of the workshop and these lessons. We believe that every learner can achieve competency with R.

You have reached competency when you find that you are able to use R to handle common analysis challenges in a reasonable amount of time (which includes time needed to look at learning materials, search for answers online, and ask colleagues for help). As you spend more time using R (there is no substitute for regular use and practice) you will find yourself gaining competency and even expertise. The more familiar you get, the more complex the analyses you will be able to carry out, with less frustration, and in less time - the fantastic world of R awaits you!

What these lessons will not teach you

Nobody wants to learn how to use R. People want to learn how to use R to analyze their own research questions!

Ok, maybe some folks learn R for R’s sake, but these lessons assume that you want to start analyzing genomic data as soon as possible. Given this, there are many valuable pieces of information about R that we simply won’t have time to cover. Hopefully, we will clear the hurdle of giving you just enough knowledge to be dangerous, which can be a high bar in R! We suggest you look into the additional learning materials in the tip box below.

Here are some R skills we will not cover in these lessons

- How to create and work with R matrices and R lists

- How to create and work with loops and conditional statements, and the “apply” family of functions (which are super useful, read more here)

- How to do basic string manipulations (e.g. finding patterns in text using grep, replacing text)

- How to plot using the default R graphic tools (we will cover plot creation, but will do so using the popular plotting package ggplot2)

- How to use advanced R statistical functions

Tip: Where to learn more

The following are good resources for learning more about R. Some of them can be quite technical, but if you are a regular R user you may ultimately need this technical knowledge.

- R for Beginners: By Emmanuel Paradis and a great starting point

- The R Manuals: Maintained by the R project

- R contributed documentation: Also linked to the R project; importantly there are materials available in several languages

- R for Data Science: A wonderful collection by noted R educators and developers Garrett Grolemund and Hadley Wickham

- Practical Data Science for Stats: Not exclusively about R usage, but a nice collection of pre-prints on data science and applications for R

- Programming in R Software Carpentry lesson: There are several Software Carpentry lessons in R to choose from

Creating objects in R

Reminder

At this point you should be coding along in the “genomics_r_basics.R” script we created in the last episode. Writing your commands in the script (and commenting it) will make it easier to record what you did and why.

What might be called a variable in many languages is called an object in R.

To create an object you need:

- a name (e.g. ‘a’)

- a value (e.g. ‘1’)

- the assignment operator (‘<-’)

In your script, “genomics_r_basics.R”, using the R assignment operator ‘<-‘, assign ‘1’ to the object ‘a’ as shown. Remember to leave a comment in the line above (using the ‘#’) to explain what you are doing:

# this line creates the object 'a' and assigns it the value '1'

a <- 1

Next, run this line of code in your script. You can run a line of code by hitting the Run button that is just above the first line of your script in the header of the Source pane or you can use the appropriate shortcut:

- Windows execution shortcut: Ctrl+Enter

- Mac execution shortcut: Cmd(⌘)+Enter

To run multiple lines of code, you can highlight all the lines you wish to run and then hit Run or use the shortcut key combo listed above.

In the RStudio ‘Console’ you should see:

a <- 1

>

The ‘Console’ will display lines of code run from a script and any outputs or status/warning/error messages (usually in red).

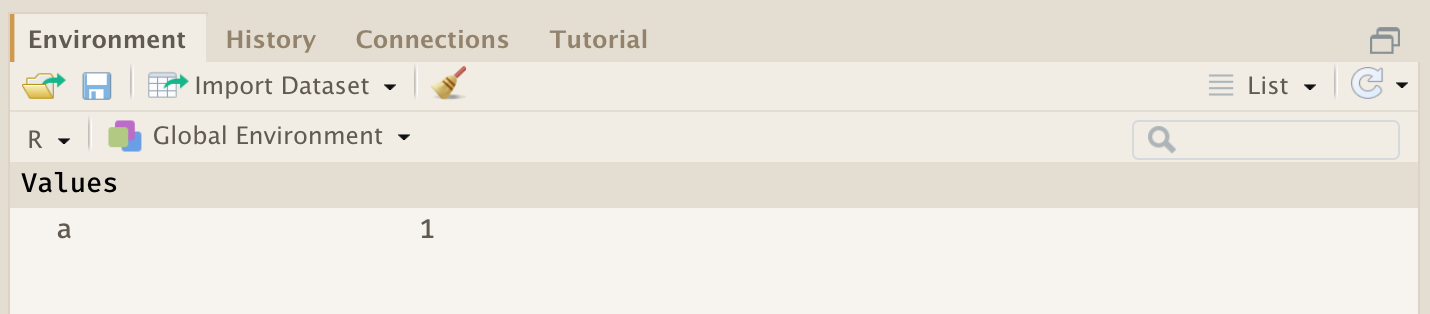

In the ‘Environment’ window you will also get a table:

The ‘Environment’ window allows you to keep track of the objects you have created in R.

Exercise: Create some objects in R

Create the following objects; give each object an appropriate name (your best guess at what name to use is fine):

- Create an object that has the value of number of pairs of human chromosomes

- Create an object that has a value of your favorite gene name

- Create an object that has this URL as its value: “ftp://ftp.ensemblgenomes.org/pub/bacteria/release-39/fasta/bacteria_5_collection/escherichia_coli_b_str_rel606/”

- Create an object that has the value of the number of chromosomes in a diploid human cell

Solution

Here are some possible answers to the challenge:

human_chr_number <- 23

gene_name <- 'pten'

ensemble_url <- 'ftp://ftp.ensemblgenomes.org/pub/bacteria/release-39/fasta/bacteria_5_collection/escherichia_coli_b_str_rel606/'

human_diploid_chr_num <- 2 * human_chr_number

Naming objects in R

Here are some important details about naming objects in R.

- Avoid spaces and special characters: Object names cannot contain spaces or the minus sign (-). You can use ‘_’ to make names more readable. You should avoid using special characters in your object name (e.g. ! @ # . , etc.). Also, object names cannot begin with a number.

- Use short, easy-to-understand names: You should avoid naming your objects using single letters (e.g. ‘n’, ‘p’, etc.). This is mostly to encourage you to use names that would make sense to anyone reading your code (a colleague, or even yourself a year from now). Also, avoiding excessively long names will make your code more readable.

- Avoid commonly used names: There are several names that may already have a definition in the R language (e.g. ‘mean’, ‘min’, ‘max’). One clue that a name already has a meaning: if you start typing it in RStudio and it gets a colored highlight, or RStudio suggests an autocompletion, the name is probably already in use.

- Use the recommended assignment operator: In R, we use ‘<-’ as the preferred assignment operator. ‘=’ works too, but is most commonly used in passing arguments to functions (more on functions later). There is a shortcut for the R assignment operator:

  - Windows shortcut: Alt+-

  - Mac shortcut: Option+-

There are a few more suggestions about naming and style you may want to learn more about as you write more R code. There are several “style guides” that have advice, and one to start with is the tidyverse R style guide.
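The short sketch below puts the two uses of = side by side. The object names sample_count and replicate_count are made up for illustration.

```r
# Preferred style: assign with <-
sample_count <- 3

# = also assigns at the top level of a script, but is less common for this
replicate_count = 3

# Inside a function call, = matches a value to an argument name;
# it does not create an object called digits in your environment
round(3.14159, digits = 2)
# [1] 3.14
```

Keeping <- for assignment and = for arguments makes it easier to tell at a glance which lines create objects.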

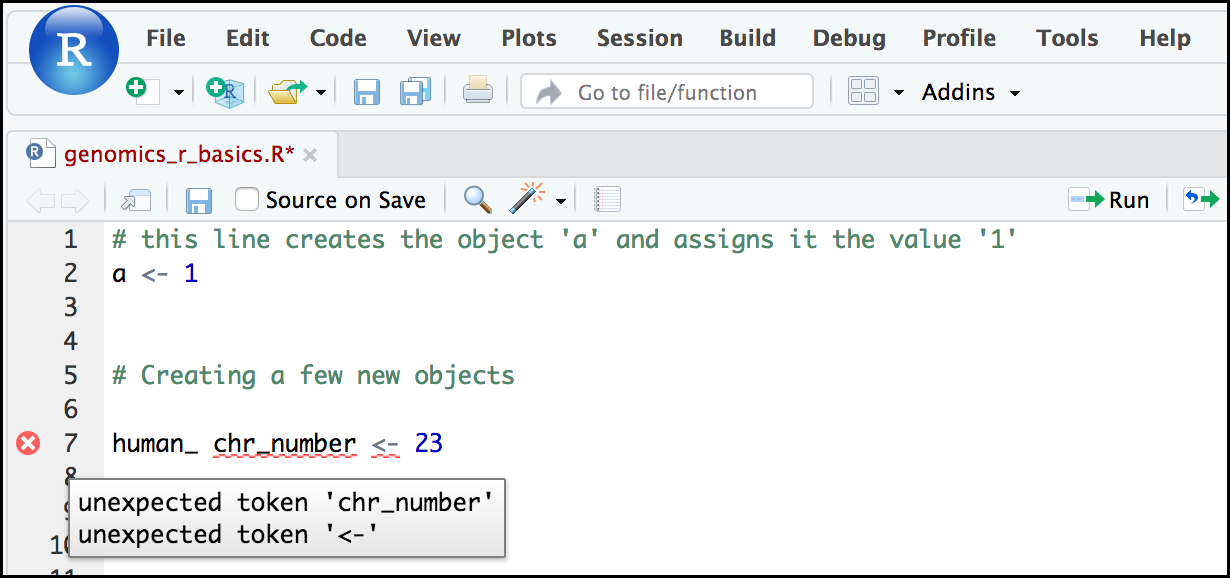

Tip: Pay attention to warnings in the script console

If you enter a line of code in your script that contains an error, RStudio may give you an error message and underline this mistake. Sometimes these messages are easy to understand, but often the messages may need some figuring out. Paying attention to these warnings will help you avoid mistakes. In the example below, our object name has a space, which is not allowed in R. The error message does not say this directly, but R is “not sure” about how to assign the name to “human_ chr_number” when the object name we want is “human_chr_number”.

Reassigning object names or deleting objects

Once an object has a value, you can change that value by overwriting it. R will not give you a warning or error if you overwrite an object, which may or may not be a good thing depending on how you look at it.

# gene_name has the value 'pten' or whatever value you used in the challenge.

# We will now assign the new value 'tp53'

gene_name <- 'tp53'

You can also remove an object from R’s memory entirely. The rm() function will delete the object.

# delete the object 'gene_name'

rm(gene_name)

If you run a line of code that has only an object name, R will normally display the contents of that object. In this case, we are told the object no longer exists.

Error: object 'gene_name' not found
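If you would rather check whether an object is defined without triggering an error, the base R function exists() can be used. This is a minimal sketch; gene_name is recreated first so that the example is self-contained.

```r
# Recreate the object so the example can be run on its own
gene_name <- 'tp53'

# exists() takes the object name as a quoted string
exists("gene_name")
# [1] TRUE

# After rm(), the object is no longer defined
rm(gene_name)
exists("gene_name")
# [1] FALSE
```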

Understanding object data types (modes)

In R, every object has two properties:

- Length: How many distinct values are held in that object

- Mode: What is the classification (type) of that object.

We will get to the “length” property later in the lesson. The “mode” property corresponds to the type of data an object represents. The most common modes you will encounter in R are:

| Mode (abbreviation) | Type of data |
|---|---|
| Numeric (num) | Numbers such as floating point/decimals (1.0, 0.5, 3.14). There are also more specific numeric types (dbl - Double, int - Integer). These differences are not relevant for most beginners and pertain to how these values are stored in memory |
| Character (chr) | A sequence of letters/numbers in single ‘’ or double “ “ quotes |
| Logical | Boolean values - TRUE or FALSE |

There are a few other modes (i.e. “complex”, “raw” etc.) but these are the three we will work with in this lesson.

Data types are familiar in many programming languages, but also in natural language where we refer to them as the parts of speech, e.g. nouns, verbs, adverbs, etc. Once you know if a word - perhaps an unfamiliar one - is a noun, you can probably guess you can count it and make it plural if there is more than one (e.g. 1 Tuatara, or 2 Tuataras). If something is an adjective, you can usually change it into an adverb by adding “-ly” (e.g. jejune vs. jejunely). Depending on the context, you may need to decide if a word is in one category or another (e.g. “cut” may be a noun when it’s on your finger, or a verb when you are preparing vegetables). These concepts have important analogies when working with R objects.

Exercise: Create objects and check their modes

Create the following objects in R, then use the mode() function to verify their modes. Try to guess what the mode will be before you look at the solution.

chromosome_name <- 'chr02'

od_600_value <- 0.47

chr_position <- '1001701'

spock <- TRUE

pilot <- Earhart

Solution

Error in eval(expr, envir, enclos): object 'Earhart' not found

mode(chromosome_name)

[1] "character"

mode(od_600_value)

[1] "numeric"

mode(chr_position)

[1] "character"

mode(spock)

[1] "logical"

mode(pilot)

Error in mode(pilot): object 'pilot' not found

Notice from the solution that even if a series of numbers is given as a value

R will consider them to be in the “character” mode if they are enclosed in

single or double quotes. Also, notice that you cannot take a string of alphanumeric

characters (e.g. Earhart) and assign it as a value for an object without quotes. In this case,

R looks for an object named Earhart but since there is no such object, no assignment can

be made. If Earhart did exist, then the mode of pilot would be whatever

the mode of Earhart was originally. If we want to create an object

called pilot that holds the name “Earhart”, we need to enclose

Earhart in quotation marks.

pilot <- "Earhart"

mode(pilot)

[1] "character"

Mathematical and functional operations on objects

Once an object exists (which by definition also means it has a mode), R can appropriately manipulate that object. For example, objects of the numeric modes can be added, multiplied, divided, etc. R provides several mathematical (arithmetic) operators including:

| Operator | Description |

|---|---|

| + | addition |

| - | subtraction |

| * | multiplication |

| / | division |

| ^ or ** | exponentiation |

| a%/%b | integer division (division where the remainder is discarded) |

| a%%b | modulus (returns the remainder after division) |

These can be used with literal numbers:

(1 + (5 ** 0.5))/2

[1] 1.618034

and importantly, can be used on any object that evaluates to (i.e. is interpreted by R as) a numeric object:

# multiply the object 'human_chr_number' by 2

human_chr_number * 2

[1] 46
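The integer division and modulus operators from the table are easiest to understand side by side. This is a small sketch with made-up values; the object names are hypothetical.

```r
# hypothetical values chosen so the division leaves a remainder
reads_total <- 17
reads_per_lane <- 5

# integer division: how many whole times does 5 go into 17?
reads_total %/% reads_per_lane   # 3

# modulus: what is left over afterwards?
reads_total %% reads_per_lane    # 2

# both exponentiation operators give the same answer
2 ^ 10                           # 1024
2 ** 10                          # 1024
```

Together the two results reconstruct the original value: 3 * 5 + 2 is 17.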

Exercise: Compute the golden ratio

One approximation of the golden ratio (φ) can be found by taking the sum of 1 and the square root of 5, and dividing by 2 as in the example above. Compute the golden ratio to 3 digits of precision using the sqrt() and round() functions. Hint: remember the round() function can take 2 arguments.

Solution

round((1 + sqrt(5))/2, digits = 3)

[1] 1.618

Notice that you can place one function inside of another.

Vectors

Vectors are probably the most commonly used object type in R.

A vector is a collection of values that are all of the same type (numbers, characters, etc.).

One of the most common ways to create a vector is to use the c() function - the “concatenate” or “combine” function. Inside the function you may enter one or more values; for multiple values, separate each value with a comma:

# Create the SNP gene name vector

snp_genes <- c("OXTR", "ACTN3", "AR", "OPRM1")

Vectors always have a mode and a length.

You can check these with the mode() and length() functions respectively.

A useful function that gives both of these pieces of information is the

str() (structure) function.

# Check the mode, length, and structure of 'snp_genes'

mode(snp_genes)

[1] "character"

length(snp_genes)

[1] 4

str(snp_genes)

chr [1:4] "OXTR" "ACTN3" "AR" "OPRM1"

Vectors are quite important in R. Another data type that we will work with later in this lesson, data frames, are collections of vectors. What we learn here about vectors will pay off even more when we start working with data frames.

Creating and subsetting vectors

Let’s create a few more vectors to play around with:

# Some interesting human SNPs

# while accuracy is important, typos in the data won't hurt you here

snps <- c('rs53576', 'rs1815739', 'rs6152', 'rs1799971')

snp_chromosomes <- c('3', '11', 'X', '6')

snp_positions <- c(8762685, 66560624, 67545785, 154039662)

Once we have vectors, one thing we may want to do is retrieve one or more specific values from a vector. To do so, we use bracket notation: we type the name of the vector followed by square brackets, and inside the brackets we place the index (e.g. a number) of the value we want, as follows:

# get the 3rd value in the snp_genes vector

snp_genes[3]

[1] "AR"

In R, every item in your vector is indexed, starting from the first item (1) through to the final number of items in your vector. You can also retrieve a range of values:

# get the 1st through 3rd value in the snp_genes vector

snp_genes[1:3]

[1] "OXTR" "ACTN3" "AR"

If you want to retrieve several (but not necessarily sequential) items from a vector, you pass a vector of indices; a vector that has the numbered positions you wish to retrieve.

# get the 1st, 3rd, and 4th value in the snp_genes vector

snp_genes[c(1, 3, 4)]

[1] "OXTR" "AR" "OPRM1"

There are additional (and perhaps less commonly used) ways of subsetting a vector (see these examples). Also, several of these subsetting expressions can be combined:

# get the 1st through the 3rd value, and 4th value in the snp_genes vector

# yes, this is a little silly in a vector of only 4 values.

snp_genes[c(1:3,4)]

[1] "OXTR" "ACTN3" "AR" "OPRM1"

Adding to, removing, or replacing values in existing vectors

Once you have an existing vector, you may want to add a new item to it. To do

so, you can use the c() function again to add your new value:

# add the gene 'CYP1A1' and 'APOA5' to our list of snp genes

# this overwrites our existing vector

snp_genes <- c(snp_genes, "CYP1A1", "APOA5")

We can verify that “snp_genes” contains the new gene entry

snp_genes

[1] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1" "APOA5"

Using a negative index will return a version of a vector with that index’s value removed:

snp_genes[-6]

[1] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1"

We can remove that value from our vector by overwriting it with this expression:

snp_genes <- snp_genes[-6]

snp_genes

[1] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1"

We can also explicitly replace or add a value at a given index using bracket notation:

snp_genes[7]<- "APOA5"

snp_genes

[1] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1" NA "APOA5"

Notice in the operation above that R inserts an NA value to extend our vector so that the gene “APOA5” is at index 7. This may be a good or not-so-good thing depending on how you use this.
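If you want to add a value by index without leaving a gap, one option is to compute the next free position from the vector's length. The sketch below uses a separate, hypothetical vector (demo_genes) so that your snp_genes object is left unchanged.

```r
# a hypothetical vector used only for this illustration
demo_genes <- c("OXTR", "ACTN3", "AR")

# the next free position is one past the current length
next_position <- length(demo_genes) + 1

# assigning to that position extends the vector by exactly one item
demo_genes[next_position] <- "OPRM1"
demo_genes

# anyNA() confirms that no NA padding was introduced
anyNA(demo_genes)   # FALSE
```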

Exercise: Examining and subsetting vectors

Answer the following questions to test your knowledge of vectors

Which of the following are true of vectors in R?

A) All vectors have a mode or a length

B) All vectors have a mode and a length

C) Vectors may have different lengths

D) Items within a vector may be of different modes

E) You can use the c() function to add one or more items to an existing vector

F) You can use the c() function to add a vector to an existing vector

Solution

A) False - Vectors have both of these properties

B) True

C) True

D) False - Vectors have only one mode (e.g. numeric, character); all items in a vector must be of this mode.

E) True

F) True

Logical Subsetting

There is one last set of cool subsetting capabilities we want to introduce. It is possible within R to retrieve items in a vector based on a logical evaluation or numerical comparison. For example, let’s say we wanted to get all of the SNPs in our vector of SNP positions that were greater than 100,000,000. We could index using the ‘>’ (greater than) logical operator:

snp_positions[snp_positions > 100000000]

[1] 154039662

In the square brackets you place the name of the vector followed by the comparison operator and (in this case) a numeric value. Some of the most common logical operators you will use in R are:

| Operator | Description |

|---|---|

| < | less than |

| <= | less than or equal to |

| > | greater than |

| >= | greater than or equal to |

| == | exactly equal to |

| != | not equal to |

| !x | not x |

| a \| b | a or b |

| a & b | a and b |
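The operators in this table can be combined inside the brackets. The following sketch uses a hypothetical copy of the SNP positions (demo_positions) to show the and, or, and not operators in a subsetting expression.

```r
# a hypothetical copy of the SNP positions, so the example is self-contained
demo_positions <- c(8762685, 66560624, 67545785, 154039662)

# '&' keeps positions where BOTH comparisons are TRUE (the 2nd and 3rd values)
mid_range <- demo_positions[demo_positions > 10000000 & demo_positions < 100000000]
mid_range

# '|' keeps positions where EITHER comparison is TRUE (the 1st and 4th values)
extremes <- demo_positions[demo_positions < 10000000 | demo_positions > 100000000]
extremes

# '!' flips TRUE and FALSE, here keeping everything NOT above the cutoff
not_large <- demo_positions[!(demo_positions > 100000000)]
not_large
```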

The magic of programming

The reason why the expression snp_positions[snp_positions > 100000000] works can be better understood if you examine what the expression “snp_positions > 100000000” evaluates to:

snp_positions > 100000000

[1] FALSE FALSE FALSE TRUE

The output above is a logical vector, the 4th element of which is TRUE. When you pass a logical vector as an index, R will return the values at the positions that are TRUE:

snp_positions[c(FALSE, FALSE, FALSE, TRUE)]

[1] 154039662

If you have never coded before, this type of situation starts to expose the “magic” of programming. We mentioned before that in the bracket notation you take your named vector followed by brackets which contain an index: named_vector[index]. The “magic” is that the index only needs to evaluate to something R can use to select positions. So, even if it does not appear to be an integer (e.g. 1, 2, 3), as long as R can evaluate it, we will get a result. That our comparison picks out a numbered position can be seen in the following situation: suppose you wanted to know which index (1, 2, 3, or 4) in our vector of SNP positions was the one that was greater than 100,000,000. We can use the which() function to return the indices of any item that evaluates as TRUE in our comparison:

which(snp_positions > 100000000)

[1] 4

Why this is important

Often in programming we will not know what inputs and values will be used when our code is executed. Rather than put in a pre-determined value (e.g. 100000000) we can use an object that can take on whatever value we need. So for example:

snp_marker_cutoff <- 100000000

snp_positions[snp_positions > snp_marker_cutoff]

[1] 154039662

Ultimately, it’s putting together flexible, reusable code like this that gets at the “magic” of programming!

A few final vector tricks

Finally, there are a few other common retrieve or replace operations you may

want to know about. First, you can check to see if any of the values of your

vector are missing (i.e. are NA). Missing data will get a more detailed treatment later,

but the is.na() function will return a logical vector, with TRUE for any NA

value:

# current value of 'snp_genes':

# chr [1:7] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1" NA "APOA5"

is.na(snp_genes)

[1] FALSE FALSE FALSE FALSE FALSE TRUE FALSE

Sometimes, you may wish to find out if a specific value (or several values) is

present in a vector. You can do this using the %in% operator, which

will return TRUE for any value in your collection that is in

the vector you are searching:

# current value of 'snp_genes':

# chr [1:7] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1" NA "APOA5"

# test to see if "ACTN3" or "APOA5" is in the snp_genes vector

# if you are looking for more than one value, you must pass this as a vector

c("ACTN3","APOA5") %in% snp_genes

[1] TRUE TRUE
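Both of these tricks produce logical vectors, so they can be used directly as an index. The sketch below works on a hypothetical copy of the vector (demo_genes); the gene "BRCA1" is included only as an example of a value that is not present.

```r
# a hypothetical copy of the gene vector, including the NA gap
demo_genes <- c("OXTR", "ACTN3", "AR", "OPRM1", "CYP1A1", NA, "APOA5")

# keep only the values that are NOT missing
complete_genes <- demo_genes[!is.na(demo_genes)]
complete_genes

# check several values at once; "BRCA1" is not in the vector
wanted <- c("ACTN3", "APOA5", "BRCA1")
wanted %in% demo_genes   # TRUE TRUE FALSE

# reverse the test to pull the matching items out of the vector
matched_genes <- demo_genes[demo_genes %in% wanted]
matched_genes            # "ACTN3" "APOA5"
```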

Review Exercise 1

What data types/modes are the following vectors?

a. snps

b. snp_chromosomes

c. snp_positions

Solution

typeof(snps)

[1] "character"

typeof(snp_chromosomes)

[1] "character"

typeof(snp_positions)

[1] "double"

Review Exercise 2

Add the following values to the specified vectors:

a. To the snps vector add: ‘rs662799’

b. To the snp_chromosomes vector add: 11

c. To the snp_positions vector add: 116792991

Solution

snps <- c(snps, 'rs662799')

snps

[1] "rs53576" "rs1815739" "rs6152" "rs1799971" "rs662799"

snp_chromosomes <- c(snp_chromosomes, "11") # did you use quotes?

snp_chromosomes

[1] "3" "11" "X" "6" "11"

snp_positions <- c(snp_positions, 116792991)

snp_positions

[1] 8762685 66560624 67545785 154039662 116792991

Review Exercise 3

Make the following change to the snp_genes vector:

Hint: Your vector should look like this in ‘Environment’: chr [1:7] "OXTR" "ACTN3" "AR" "OPRM1" "CYP1A1" NA "APOA5". If not, recreate the vector by running this expression:

snp_genes <- c("OXTR", "ACTN3", "AR", "OPRM1", "CYP1A1", NA, "APOA5")

a. Create a new version of snp_genes that does not contain CYP1A1 and then

b. Add 2 NA values to the end of snp_genes

Solution

snp_genes <- snp_genes[-5]

snp_genes <- c(snp_genes, NA, NA)

snp_genes

[1] "OXTR" "ACTN3" "AR" "OPRM1" NA "APOA5" NA NA

Review Exercise 4

Using indexing, create a new vector named combined that contains:

- The 1st value in snp_genes

- The 1st value in snps

- The 1st value in snp_chromosomes

- The 1st value in snp_positions

Solution

combined <- c(snp_genes[1], snps[1], snp_chromosomes[1], snp_positions[1])

combined

[1] "OXTR" "rs53576" "3" "8762685"

Review Exercise 5

What type of data is combined?

Solution

typeof(combined)

[1] "character"
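The result above follows from the rule that a vector can hold only one mode: when c() is given values of different modes, R converts them all to a single mode that can represent every value. The sketch below illustrates this with made-up values; the object names are hypothetical.

```r
# mixing a character value with a number and a logical:
# everything is converted to character
mixed_values <- c("OXTR", 8762685, TRUE)
mixed_values           # "OXTR" "8762685" "TRUE"
typeof(mixed_values)   # "character"

# mixing only a number and a logical:
# the logical is converted to a number (TRUE becomes 1)
number_and_logical <- c(8762685, TRUE)
number_and_logical     # 8762685 1
typeof(number_and_logical)   # "double"
```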

Bonus material: Lists

Lists are quite useful in R, but we won’t be using them in the genomics lessons. That said, you may come across lists in the way that some bioinformatics programs may store and/or return data to you. One of the key attributes of a list is that, unlike a vector, a list may contain data of more than one mode. Learn more about creating and using lists using this nice tutorial. In this one example, we will create a named list and show you how to retrieve items from the list.

# Create a named list using the 'list' function and our SNP examples

# Note, for easy reading we have placed each item in the list on a separate line

# Nothing special about this, you can do this for any multiline commands

# To run this command, make sure the entire command (all 4 lines) is highlighted

# before running

# Note also, as we are doing all this inside the list() function use of the

# '=' sign is good style

snp_data <- list(genes = snp_genes,

refference_snp = snps,

chromosome = snp_chromosomes,

position = snp_positions)

# Examine the structure of the list

str(snp_data)

List of 4

$ genes : chr [1:8] "OXTR" "ACTN3" "AR" "OPRM1" ...

$ refference_snp: chr [1:5] "rs53576" "rs1815739" "rs6152" "rs1799971" ...

$ chromosome : chr [1:5] "3" "11" "X" "6" ...

$ position : num [1:5] 8.76e+06 6.66e+07 6.75e+07 1.54e+08 1.17e+08

To get all the values for the position object in the list, we use the $ notation:

# return all the values of position object

snp_data$position

[1] 8762685 66560624 67545785 154039662 116792991

To get the first value in the position object, use the [] notation to index:

# return first value of the position object

snp_data$position[1]

[1] 8762685
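Besides the $ notation, list items can be retrieved with double or single brackets, and the two behave differently. The sketch below uses a small hypothetical list (demo_list) rather than snp_data.

```r
# a small hypothetical named list for illustration
demo_list <- list(genes = c("OXTR", "ACTN3"),
                  position = c(8762685, 66560624))

# '$' and double brackets both return the item itself (here a numeric vector)
demo_list$position
demo_list[["position"]]
class(demo_list[["position"]])   # "numeric"

# single brackets return a smaller list that still wraps the item
demo_list["position"]
class(demo_list["position"])     # "list"
```

Double brackets are handy when the name of the item is stored in another object, since $ only accepts the name typed out literally.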

Key Points

Effectively using R is a journey of months or years. Still, you don’t have to be an expert to use R, and you can start using R to analyze your data with about a day’s worth of training

It is important to understand how data are organized by R in a given object type and how the mode of that type (e.g. numeric, character, logical, etc.) will determine how R will operate on that data.

Working with vectors effectively prepares you for understanding how data are organized in R.

R Basics continued - factors and data frames

Overview

Teaching: 60 min

Exercises: 30 min

Questions

How do I get started with tabular data (e.g. spreadsheets) in R?

What are some best practices for reading data into R?

How do I save tabular data generated in R?

Objectives

Explain the basic principle of tidy datasets

Be able to load a tabular dataset using base R functions

Be able to determine the structure of a data frame including its dimensions and the datatypes of variables

Be able to subset/retrieve values from a data frame

Understand how R may coerce data into different modes

Be able to change the mode of an object

Understand that R uses factors to store and manipulate categorical data

Be able to manipulate a factor, including subsetting and reordering

Be able to apply an arithmetic function to a data frame

Be able to coerce the class of an object (including variables in a data frame)

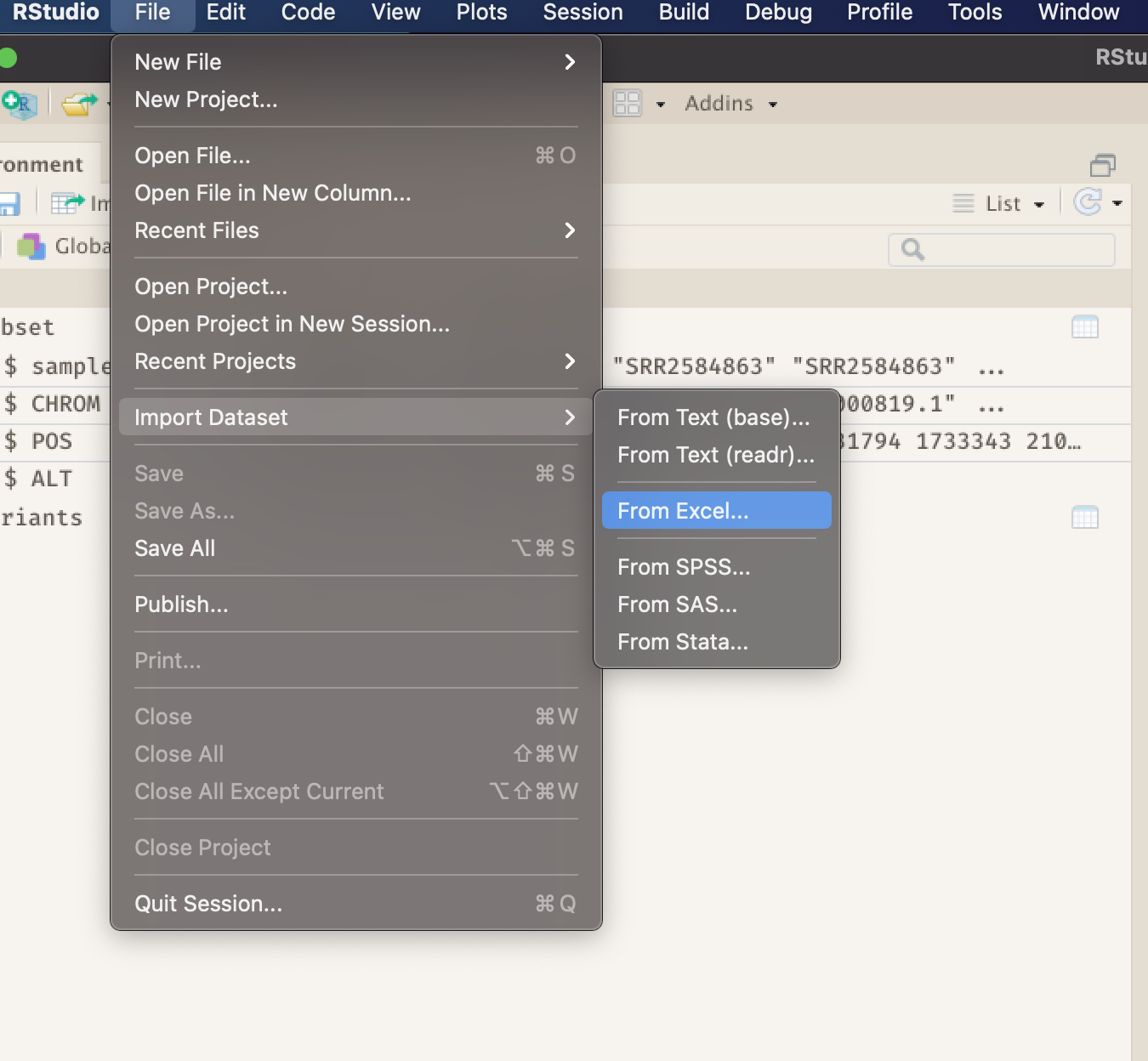

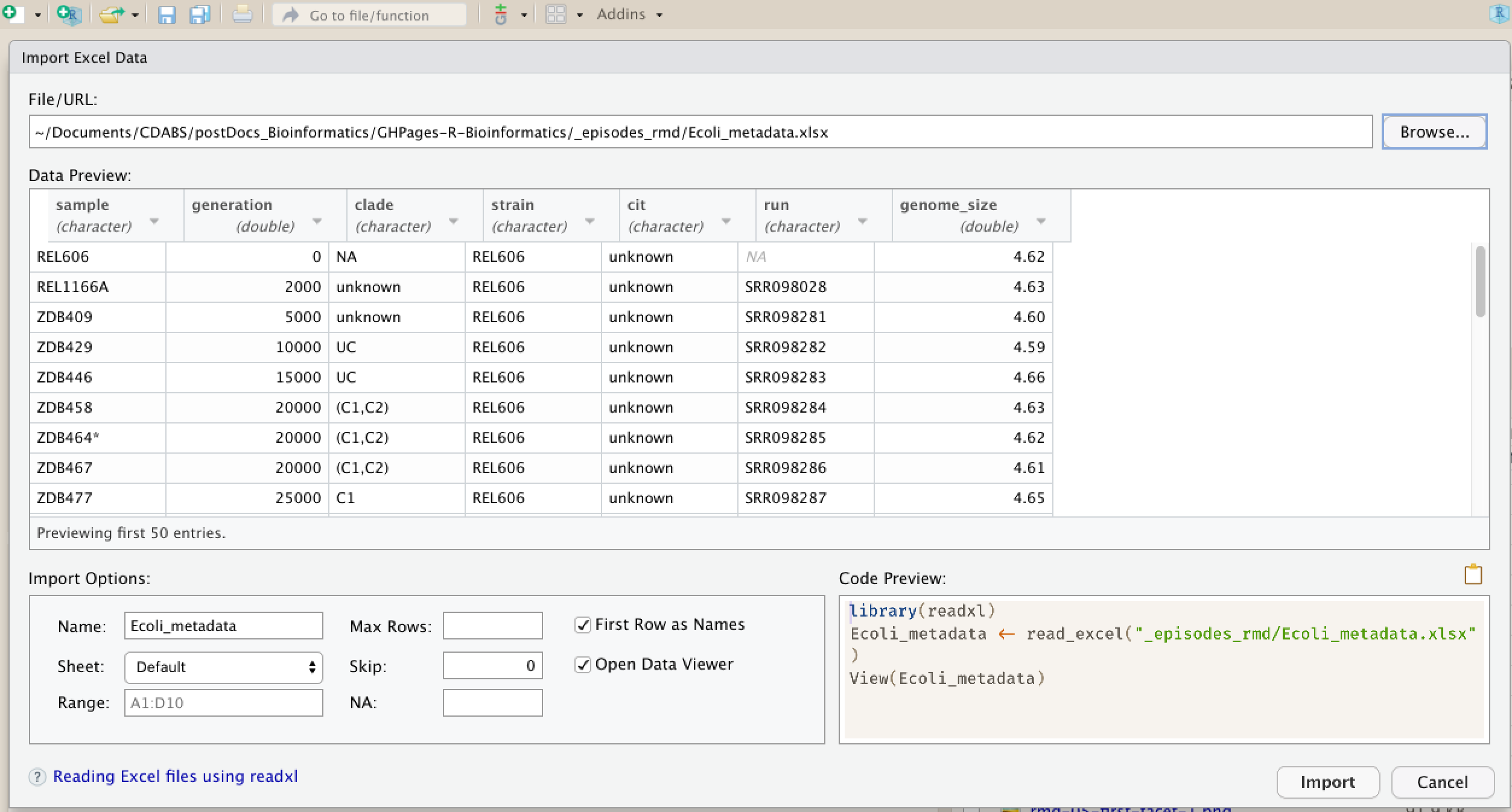

Be able to import data from Excel

Be able to save a data frame as a delimited file

Working with spreadsheets (tabular data)

A substantial amount of the data we work with in genomics will be tabular data; this is data arranged in rows and columns, also known as spreadsheets. We could write a whole lesson on how to work with spreadsheets effectively (actually we did). For our purposes, we want to remind you of a few principles before we work with our first set of example data:

1) Keep raw data separate from analyzed data

This is principle number one because if you can’t tell which files are the original raw data, you risk making some serious mistakes (e.g. drawing conclusions from data which have been manipulated in some unknown way). This may seem redundant, but it is important to review!

2) Keep spreadsheet data Tidy

The simplest principle of Tidy data is that we have one row in our spreadsheet for each observation or sample, and one column for every variable that we measure or report on. As simple as this sounds, it’s very easily violated. Most data scientists agree that a significant amount of their time is spent tidying data for analysis. Read more about data organization in our lesson and in this paper.

3) Trust but verify

Finally, while you don’t need to be paranoid about data, you should have a plan for how you will prepare it for analysis. This is a focus of this lesson. You probably already have a lot of intuition, expectations, and assumptions about your data - the range of values you expect, how many values should have been recorded, etc. Of course, as the data get larger our human ability to keep track will start to fail (and yes, it can fail for small data sets too). R will help you to examine your data so that you can have greater confidence in your analysis, and its reproducibility.

Tip: Keeping your raw data separate

When you work with data in R, you are not changing the original file you loaded that data from. This is different than (for example) working with a spreadsheet program where changing the value of the cell leaves you one “save”-click away from overwriting the original file. You have to purposely use a writing function (e.g. write.csv()) to save data loaded into R. In that case, be sure to save the modified data into a new file. More on this later in the lesson.
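As a minimal sketch of saving modified data to a new file, the example below writes a small made-up data frame to a temporary folder. The data frame, the file name, and the use of tempdir() are all assumptions for illustration; in a real project you would choose a path in your own results folder.

```r
# a small hypothetical data frame standing in for modified data
demo_table <- data.frame(sample_id = c("s1", "s2"),
                         depth = c(10, 12))

# build a path to a NEW file (here in R's temporary folder)
output_path <- file.path(tempdir(), "demo_table_modified.csv")

# row.names = FALSE avoids writing an extra column of row numbers
write.csv(demo_table, file = output_path, row.names = FALSE)

# confirm the file was written, then read it back in
file.exists(output_path)
reloaded <- read.csv(output_path)
reloaded
```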

Importing tabular data into R

There are several ways to import data into R. For our purpose here, we will

focus on using the tools every R installation comes with (so called “base” R) to import a comma-delimited file containing the results of a variant calling workflow.

We will need to load the sheet using a function called read.csv().

Exercise: Review the arguments of the read.csv() function

Before using the read.csv() function, use R’s help feature to answer the following questions.

Hint: Entering ‘?’ before the function name and then running that line will bring up the help documentation. Also, when reading this particular help be careful to pay attention to the ‘read.csv’ expression under the ‘Usage’ heading. Other answers will be in the ‘Arguments’ heading.

A) What is the default value of ‘header’ in the read.csv() function?

B) What argument would you have to change to read a file that was delimited by semicolons (;) rather than commas?

C) What argument would you have to change to read a file in which numbers used commas for decimal separation (i.e. 1,00)?

D) What argument would you have to change to read in only the first 10,000 rows of a very large file?

Solution

A) The read.csv() function has the argument ‘header’ set to TRUE by default. This means the function assumes the first row is header information (i.e. column names) unless you tell it otherwise.

B) The read.csv() function has the argument ‘sep’ set to “,”. This means the function assumes commas are used as delimiters, as you would expect. Changing this parameter (e.g. sep=";") would now interpret semicolons as delimiters.

C) Although it is not listed in the read.csv() usage, read.csv() is a “version” of the function read.table() and accepts all its arguments. If you set dec="," you could change the decimal operator. We’d probably assume the delimiter is some other character.

D) You can set nrows to a numeric value (e.g. nrows=10000) to choose how many rows of a file you read in. This may be useful for very large files where not all the data is needed to test some data cleaning steps you are applying.

Hopefully, this exercise gets you thinking about using the provided help documentation in R. There are many arguments that exist, but which we won’t have time to cover. Look here to get familiar with functions you use frequently; you may be surprised at what you find they can do.
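To see these arguments in action without needing a file, the sketch below reads a few lines of made-up, semicolon-delimited text through the text argument that read.csv() passes on to read.table(). The data values and object names are hypothetical.

```r
# hypothetical semicolon-delimited data with decimal commas
demo_text <- "sample;depth;quality
s1;10;4,5
s2;12;3,75
s3;8;5,0"

# sep sets the delimiter, dec sets the decimal mark,
# and nrows limits how many data rows are read
demo_data <- read.csv(text = demo_text, sep = ";", dec = ",", nrows = 2)

demo_data
nrow(demo_data)      # 2, because only the first two data rows were read
demo_data$quality    # 4.50 3.75, read as numbers rather than text
```

Base R also provides read.csv2(), whose defaults are sep = ";" and dec = ",", for files written in this style.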

Now, let’s read in the file combined_tidy_vcf.csv which will be located in

~/Documents/post-docs_genomics_r/data/. Call this data variants. The

first argument to pass to our read.csv() function is the file path for our

data. The file path must be in quotes and now is a good time to remember to

use tab autocompletion. If you use tab autocompletion you avoid typos and

errors in file paths. Use it!

## read in a CSV file and save it as 'variants'

# assuming you're in the project home directory too!

variants <- read.csv("data/combined_tidy_vcf.csv")

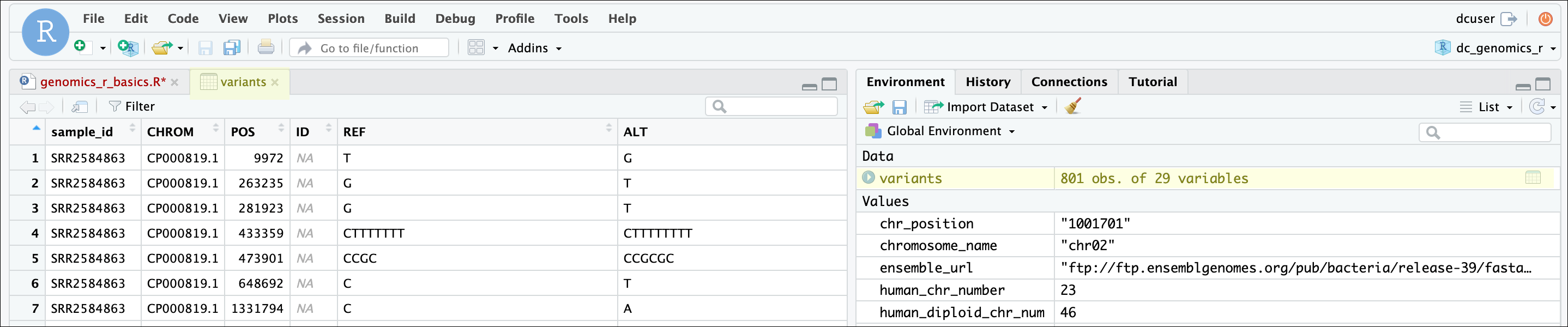

One of the first things you should notice is that in the Environment window,

you have the variants object, listed as 801 obs. (observations/rows)

of 29 variables (columns). Double-clicking on the name of the object will open

a view of the data in a new tab.

Summarizing, subsetting, and determining the structure of a data frame.

A data frame is the standard way in R to store tabular data. A data frame could also be thought of as a collection of vectors, all of which have the same length. Using only two functions, we can learn a lot about our data frame, including some summary statistics as well as the “structure” of the data frame. Let’s examine what each of these functions can tell us:

## get summary statistics on a data frame

summary(variants)

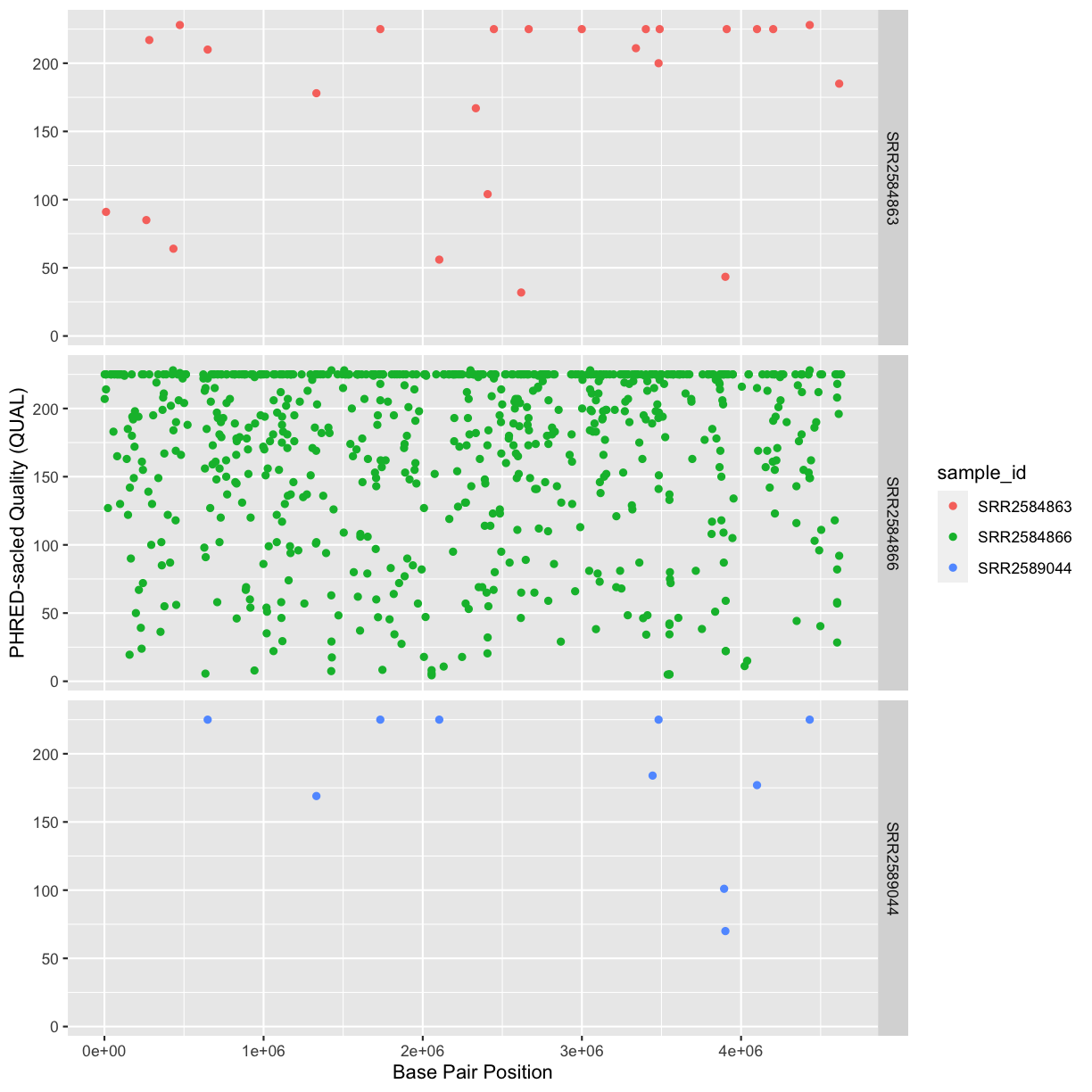

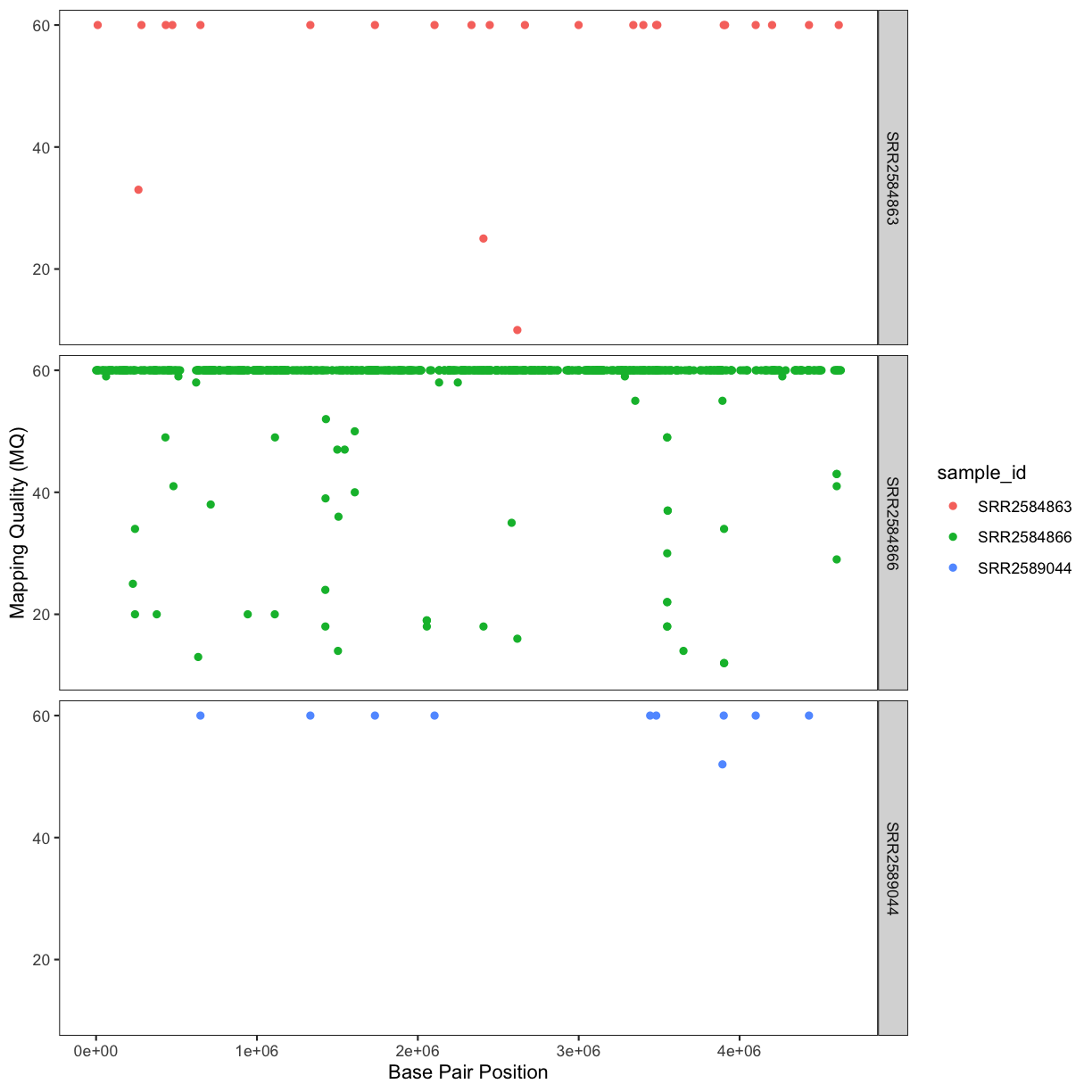

sample_id CHROM POS ID

Length:801 Length:801 Min. : 1521 Mode:logical

Class :character Class :character 1st Qu.:1115970 NA's:801

Mode :character Mode :character Median :2290361

Mean :2243682

3rd Qu.:3317082

Max. :4629225

REF ALT QUAL FILTER

Length:801 Length:801 Min. : 4.385 Mode:logical

Class :character Class :character 1st Qu.:139.000 NA's:801

Mode :character Mode :character Median :195.000

Mean :172.276

3rd Qu.:225.000

Max. :228.000

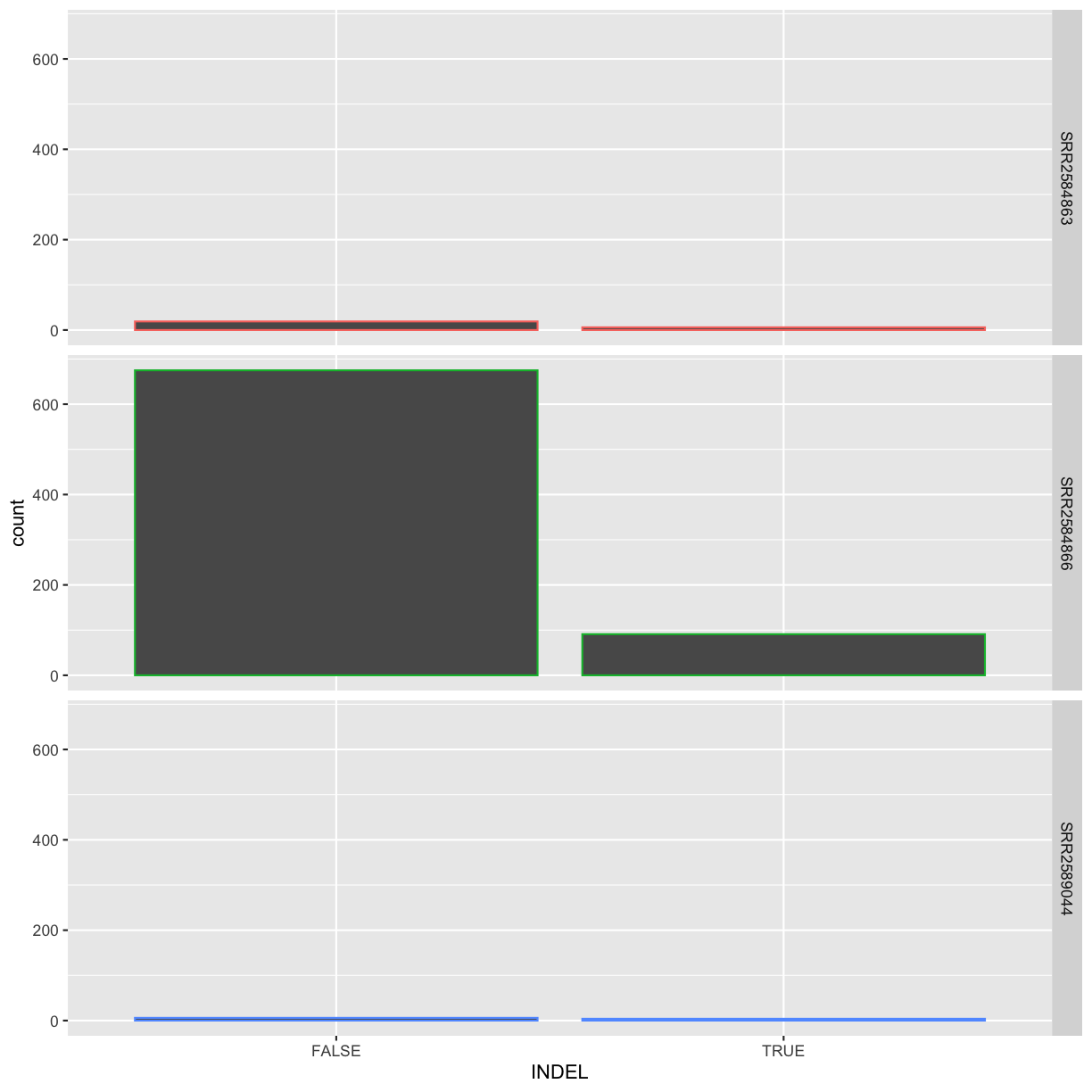

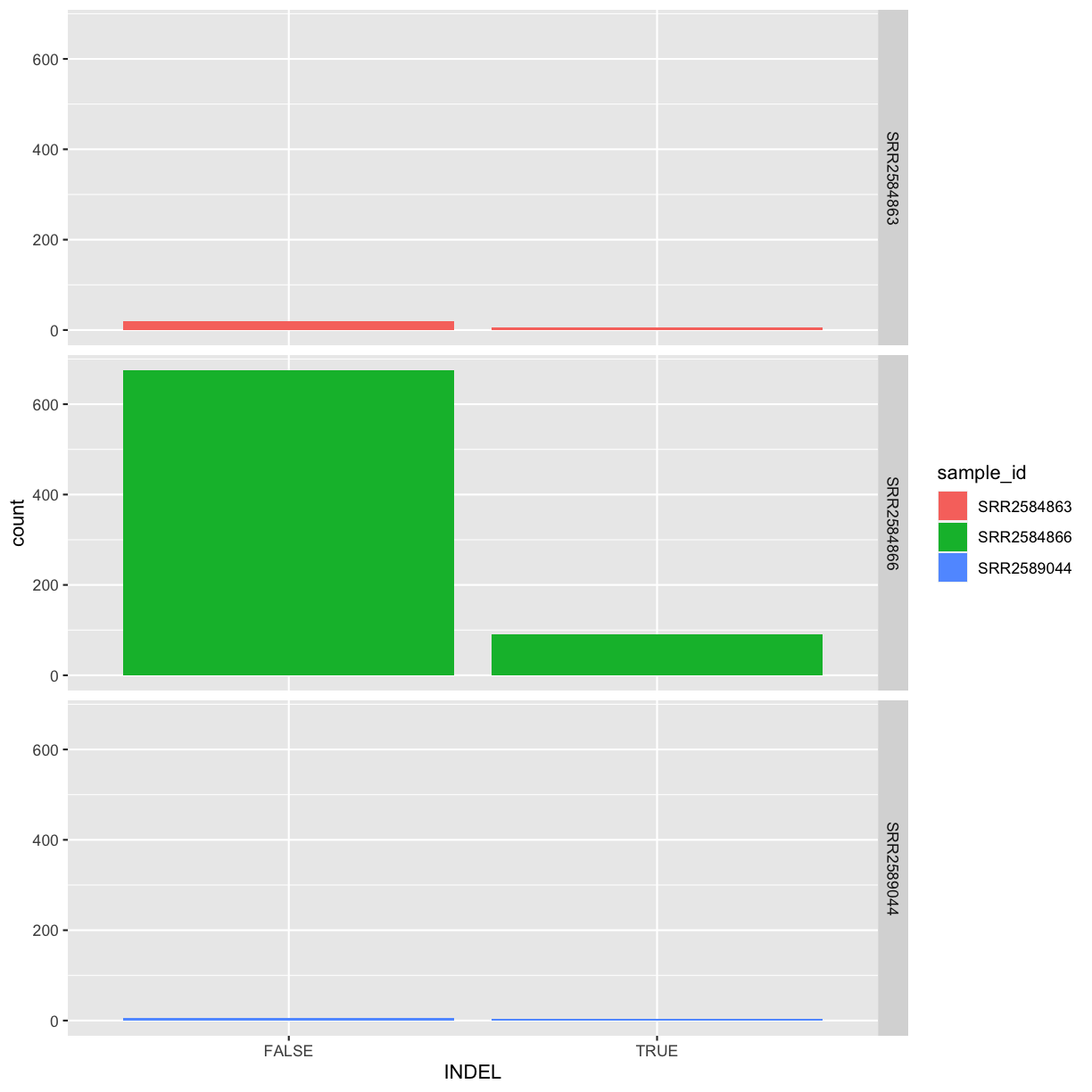

INDEL IDV IMF DP

Mode :logical Min. : 2.000 Min. :0.5714 Min. : 2.00

FALSE:700 1st Qu.: 7.000 1st Qu.:0.8824 1st Qu.: 7.00

TRUE :101 Median : 9.000 Median :1.0000 Median :10.00

Mean : 9.396 Mean :0.9219 Mean :10.57

3rd Qu.:11.000 3rd Qu.:1.0000 3rd Qu.:13.00

Max. :20.000 Max. :1.0000 Max. :79.00

NA's :700 NA's :700

VDB RPB MQB BQB

Min. :0.0005387 Min. :0.0000 Min. :0.0000 Min. :0.1153

1st Qu.:0.2180410 1st Qu.:0.3776 1st Qu.:0.1070 1st Qu.:0.6963

Median :0.4827410 Median :0.8663 Median :0.2872 Median :0.8615

Mean :0.4926291 Mean :0.6970 Mean :0.5330 Mean :0.7784

3rd Qu.:0.7598940 3rd Qu.:1.0000 3rd Qu.:1.0000 3rd Qu.:1.0000

Max. :0.9997130 Max. :1.0000 Max. :1.0000 Max. :1.0000

NA's :773 NA's :773 NA's :773

MQSB SGB MQ0F ICB

Min. :0.01348 Min. :-0.6931 Min. :0.00000 Mode:logical

1st Qu.:0.95494 1st Qu.:-0.6762 1st Qu.:0.00000 NA's:801

Median :1.00000 Median :-0.6620 Median :0.00000

Mean :0.96428 Mean :-0.6444 Mean :0.01127

3rd Qu.:1.00000 3rd Qu.:-0.6364 3rd Qu.:0.00000

Max. :1.01283 Max. :-0.4536 Max. :0.66667

NA's :48

HOB AC AN DP4 MQ

Mode:logical Min. :1 Min. :1 Length:801 Min. :10.00

NA's:801 1st Qu.:1 1st Qu.:1 Class :character 1st Qu.:60.00

Median :1 Median :1 Mode :character Median :60.00

Mean :1 Mean :1 Mean :58.19

3rd Qu.:1 3rd Qu.:1 3rd Qu.:60.00

Max. :1 Max. :1 Max. :60.00

Indiv gt_PL gt_GT gt_GT_alleles

Length:801 Length:801 Min. :1 Length:801

Class :character Class :character 1st Qu.:1 Class :character

Mode :character Mode :character Median :1 Mode :character

Mean :1

3rd Qu.:1

Max. :1

Our data frame had 29 variables, so we get 29 fields that summarize the data.

The QUAL, IMF, and VDB variables (and several others) are

numerical data and so you get summary statistics on the min and max values for

these columns, as well as mean, median, and interquartile ranges. Many of the

other variables (e.g. sample_id) are treated as character data (more on this

in a bit).

There is a lot to work with, so we will subset the first three columns and column 6 into a

new data frame using bracket notation.

## put the first three columns and column 6 of variants into a new data frame called subset

subset <- variants[, c(1:3, 6)]

Now, let’s use the str() (structure) function to look a little more closely

at how data frames work:

## get the structure of a data frame

str(subset)

'data.frame': 801 obs. of 4 variables:

$ sample_id: chr "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863" ...

$ CHROM : chr "CP000819.1" "CP000819.1" "CP000819.1" "CP000819.1" ...

$ POS : int 9972 263235 281923 433359 473901 648692 1331794 1733343 2103887 2333538 ...

$ ALT : chr "G" "T" "T" "CTTTTTTTT" ...

Ok, that’s a lot to unpack! Some things to notice:

- The object type data.frame is displayed in the first row along with its dimensions, in this case 801 observations (rows) and 4 variables (columns)

- Each variable (column) has a name (e.g. sample_id). This is followed by the object mode (e.g. chr, int, etc.). Notice that before each variable name there is a $ - this will be important later.
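The same pieces of information that str() reports can also be retrieved individually. The sketch below uses a tiny hypothetical data frame (demo_frame) so that it runs on its own; the same functions work on the variants data frame.

```r
# a tiny hypothetical data frame with two columns
demo_frame <- data.frame(sample_id = c("s1", "s2", "s3"),
                         POS = c(9972L, 263235L, 281923L))

dim(demo_frame)      # 3 2 (rows, then columns)
nrow(demo_frame)     # 3
ncol(demo_frame)     # 2
names(demo_frame)    # "sample_id" "POS"

# the '$' shown by str() is also how you pull out a single column
demo_frame$POS
typeof(demo_frame$POS)   # "integer"
```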

Introducing Factors

Factors are the final major data structure we will introduce in our R genomics lessons. Factors can be thought of as vectors which are specialized for categorical data. Given R’s specialization for statistics, this makes sense since categorical and continuous variables are usually treated differently. Sometimes you may want to have data treated as a factor, but in other cases, this may be undesirable.

Let’s see the value of treating some of our data, which are categorical in nature, as factors. Let’s take a look at just the alternate alleles

## extract the "ALT" column to a new object

alt_alleles <- subset$ALT

Let’s look at the first few items in our vector using head():

head(alt_alleles)

[1] "G" "T" "T" "CTTTTTTTT" "CCGCGC" "T"

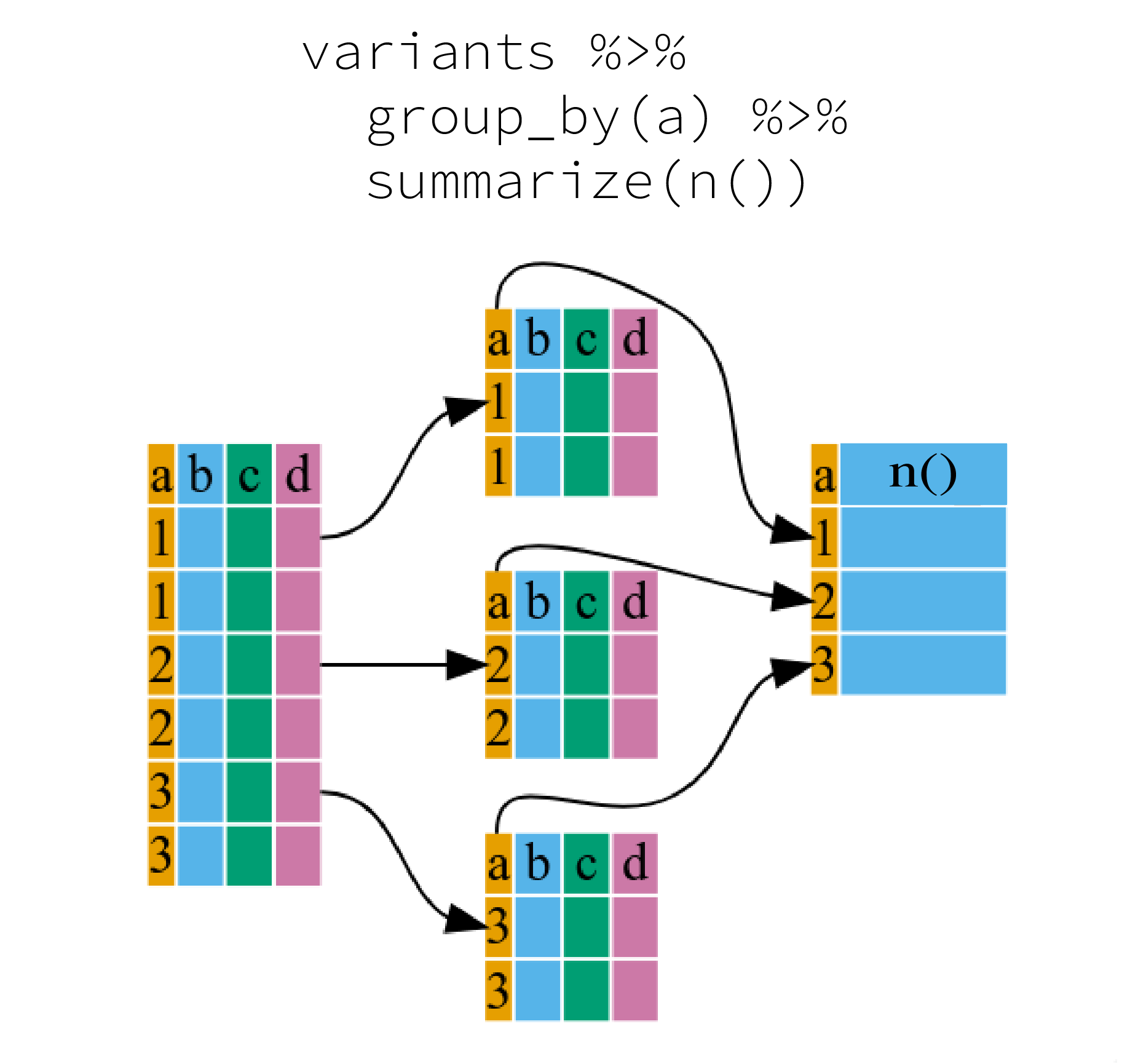

There are 801 alleles (one for each row). To simplify, let’s look at just the single-nucleotide alleles (SNPs). We can use some of the vector indexing skills from the last episode.

snps <- c(alt_alleles[alt_alleles=="A"],

alt_alleles[alt_alleles=="T"],

alt_alleles[alt_alleles=="G"],

alt_alleles[alt_alleles=="C"])

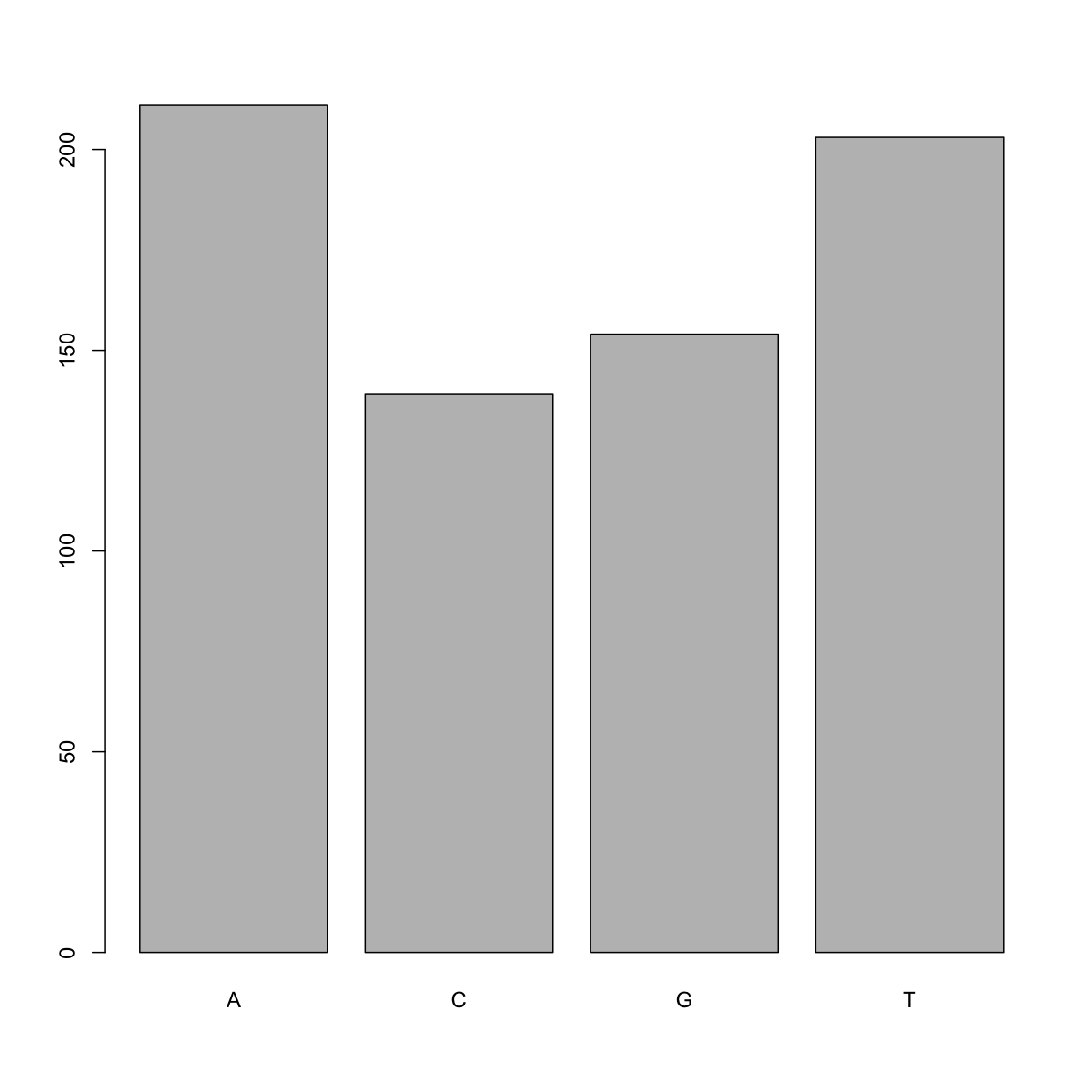

This leaves us with a vector of the 701 alternative alleles which were single nucleotides. Right now, they are being treated as characters, but we could treat them as categories of SNP. Doing this will enable some nice features. For example, we can try to generate a plot of this character vector as it is right now:

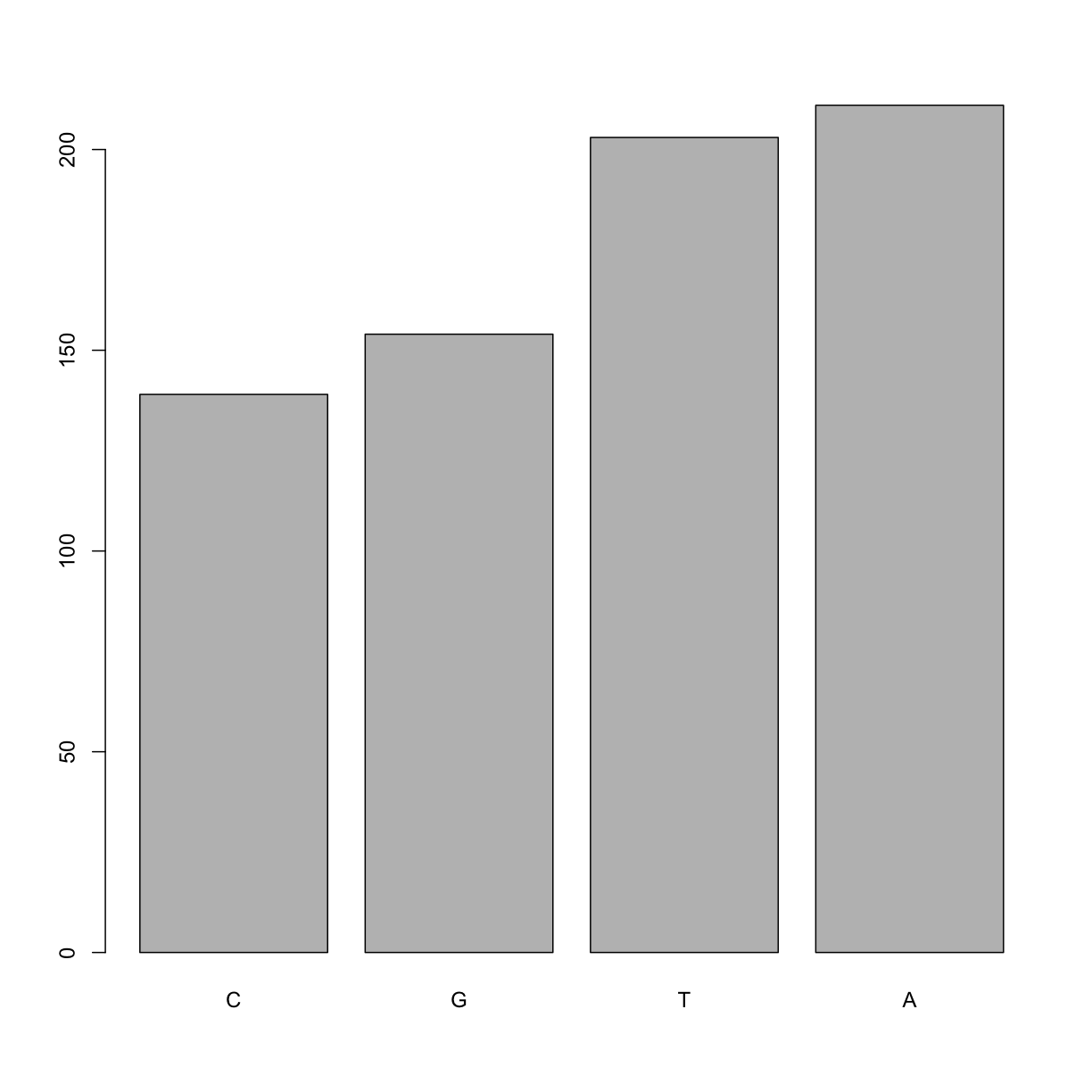

plot(snps)

Warning in xy.coords(x, y, xlabel, ylabel, log): NAs introduced by coercion

Warning in min(x): no non-missing arguments to min; returning Inf

Warning in max(x): no non-missing arguments to max; returning -Inf

Error in plot.window(...): need finite 'ylim' values

Whoops! Though the plot() function will do its best to give us a quick plot,

it is unable to do so here. One way to fix this is to tell R to treat the SNPs

as categories (i.e. a factor vector); we will create a new object to avoid

confusion using the factor() function:

factor_snps <- factor(snps)

Let’s learn a little more about this new type of vector:

str(factor_snps)

Factor w/ 4 levels "A","C","G","T": 1 1 1 1 1 1 1 1 1 1 ...

What we get back are the categories (“A”,”C”,”G”,”T”) in our factor; these are called “Levels”. Levels are the different categories contained in a factor. By default, R will organize the levels in a factor in alphabetical order. So the first level in this factor is “A”.

For the sake of efficiency, R stores the content of a factor as a vector of

integers, in which an integer is assigned to each of the possible levels. Recall

levels are assigned in alphabetical order. In this case, the first item in our

factor_snps object is “A”, which happens to be the 1st level of our factor,

ordered alphabetically. This explains the sequence of “1”s (“Factor w/ 4 levels

“A”,”C”,”G”,”T”: 1 1 1 1 1 1 1 1 1 1 …”), since “A” is the first level, and

the first few items in our factor are all “A”s.
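You can look at the two parts of a factor, its levels and its underlying integer codes, separately. The sketch below uses a short hypothetical factor (demo_factor) so the codes are easy to follow.

```r
# a short hypothetical factor of alleles
demo_factor <- factor(c("T", "A", "G", "A", "C", "T"))

# the levels are the distinct categories, sorted alphabetically
levels(demo_factor)         # "A" "C" "G" "T"
nlevels(demo_factor)        # 4

# the integer codes point at positions in the levels
# ("T" is the 4th level, "A" is the 1st, and so on)
as.integer(demo_factor)     # 4 1 3 1 2 4

# converting back to character recovers the original labels
as.character(demo_factor)   # "T" "A" "G" "A" "C" "T"
```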

We can see how many items in our vector fall into each category:

summary(factor_snps)

A C G T

211 139 154 203

As you can imagine, this is already useful when you want to generate a tally.

Tip: treating objects as categories without changing their mode

You don’t have to make an object a factor to get the benefits of treating an object as a factor. See what happens when you use the as.factor() function on factor_snps. To generate a tally, you can sometimes also use the table() function; though sometimes you may need to combine both (i.e. table(as.factor(object)))
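As a quick sketch of this tip, the example below tallies a hypothetical character vector (demo_alleles) directly with table(), without converting it to a factor first.

```r
# a hypothetical character vector of alleles
demo_alleles <- c("A", "T", "T", "G", "T", "A")

# table() counts each distinct value, even for a character vector
allele_counts <- table(demo_alleles)
allele_counts

# summary() on the character vector only describes the vector itself...
summary(demo_alleles)

# ...while summary() on a factor gives the same tally as table()
summary(factor(demo_alleles))
```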

Plotting and ordering factors

One of the most common uses for factors will be when you plot categorical values. For example, suppose we want to know how many of our variants had each possible SNP; we could generate a plot:

plot(factor_snps)

This isn’t a particularly pretty example of a plot but it works. We’ll be learning much more about creating nice, publication-quality graphics later in this lesson.

If you recall, factor levels are ordered alphabetically by default. That might make sense, but categories (e.g., “red”, “blue”, “green”) often do not have an intrinsic order. What if we wanted to order our plot according to the numerical value (i.e., by SNP frequency)? We can enforce an order on our factors:

ordered_factor_snps <- factor(factor_snps, levels = names(sort(table(factor_snps))))

Let’s deconstruct this from the inside out (you can try each of these commands to see why this works):

- We create a table of factor_snps to get the frequency of each SNP: table(factor_snps)

- We sort this table: sort(table(factor_snps)); use the decreasing = parameter for this function if you wanted to change from the default of FALSE

- Using the names function gives us just the character names of the table sorted by frequencies: names(sort(table(factor_snps)))

- The factor function is what allows us to create a factor. We give it the factor_snps object as input, and use the levels= parameter to enforce the ordering of the levels.
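The steps above can be followed on a small scale. The sketch below uses a hypothetical factor (demo_snps) and sets decreasing = TRUE, so the most frequent category comes first; leave that argument out to get the default increasing order.

```r
# a small hypothetical factor: "T" appears 3 times, "A" twice, "G" once
demo_snps <- factor(c("A", "T", "T", "G", "T", "A"))

# step 1: tally each level
counts <- table(demo_snps)

# step 2: sort the tally, largest count first
sorted_counts <- sort(counts, decreasing = TRUE)

# step 3: keep just the level names, now in frequency order
level_order <- names(sorted_counts)
level_order                  # "T" "A" "G"

# step 4: rebuild the factor with the levels in that order
ordered_demo <- factor(demo_snps, levels = level_order)
levels(ordered_demo)         # "T" "A" "G"
```

Only the order of the levels changes; the values stored in the factor stay the same.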

Now we see our plot has been reordered:

plot(ordered_factor_snps)

Factors come in handy in many places when using R. Even using more sophisticated plotting packages such as ggplot2 will sometimes require you to understand how to manipulate factors.

Subsetting data frames

Next, we are going to talk about how you can get specific values from data frames, and where necessary, change the mode of a column of values.

The first thing to remember is that a data frame is two-dimensional (rows and

columns). Therefore, to select a specific value we will once again use

[] (bracket) notation, but we will specify more than one value (except in some cases

where we are taking a range).

Exercise: Subsetting a data frame

Try the following indices and functions and try to figure out what they return

a. variants[1,1]

b. variants[2,4]

c. variants[801,29]

d. variants[2, ]

e. variants[-1, ]

f. variants[1:4,1]

g. variants[1:10,c("REF","ALT")]

h. variants[,c("sample_id")]

i. head(variants)

j. tail(variants)

k. variants$sample_id

l. variants[variants$REF == "A",]

Solution

a.

variants[1,1] #returns value in first row, first column[1] "SRR2584863"b.

variants[2,4] #returns value in second row, fourth column[1] NAc.

variants[801,29] #returns value in 801st row, 29th column (i.e. the vlue in last row and column - remember our dataset has 801 observations of 2 variables)[1] "T"d.

variants[2, ] #returns entire second rowsample_id CHROM POS ID REF ALT QUAL FILTER INDEL IDV IMF DP VDB 2 SRR2584863 CP000819.1 263235 NA G T 85 NA FALSE NA NA 6 0.096133 RPB MQB BQB MQSB SGB MQ0F ICB HOB AC AN DP4 MQ 2 1 1 1 NA -0.590765 0.166667 NA NA 1 1 0,1,0,5 33 Indiv gt_PL 2 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 112,0 gt_GT gt_GT_alleles 2 1 Te.

variants[-1, ] #returns all rows but the first (only the first six of those rows are shown here)

   sample_id      CHROM     POS ID      REF       ALT QUAL FILTER INDEL IDV IMF
2 SRR2584863 CP000819.1  263235 NA        G         T   85     NA FALSE  NA  NA
3 SRR2584863 CP000819.1  281923 NA        G         T  217     NA FALSE  NA  NA
4 SRR2584863 CP000819.1  433359 NA CTTTTTTT CTTTTTTTT   64     NA  TRUE  12 1.0
5 SRR2584863 CP000819.1  473901 NA     CCGC    CCGCGC  228     NA  TRUE   9 0.9
6 SRR2584863 CP000819.1  648692 NA        C         T  210     NA FALSE  NA  NA
7 SRR2584863 CP000819.1 1331794 NA        C         A  178     NA FALSE  NA  NA
  DP      VDB RPB MQB BQB     MQSB       SGB     MQ0F ICB HOB AC AN     DP4 MQ
2  6 0.096133   1   1   1       NA -0.590765 0.166667  NA  NA  1  1 0,1,0,5 33
3 10 0.774083  NA  NA  NA 0.974597 -0.662043 0.000000  NA  NA  1  1 0,0,4,5 60
4 12 0.477704  NA  NA  NA 1.000000 -0.676189 0.000000  NA  NA  1  1 0,1,3,8 60
5 10 0.659505  NA  NA  NA 0.916482 -0.662043 0.000000  NA  NA  1  1 1,0,2,7 60
6 10 0.268014  NA  NA  NA 0.916482 -0.670168 0.000000  NA  NA  1  1 0,0,7,3 60
7  8 0.624078  NA  NA  NA 0.900802 -0.651104 0.000000  NA  NA  1  1 0,0,3,5 60
                                                               Indiv gt_PL
2 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 112,0
3 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 247,0
4 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam  91,0
5 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 255,0
6 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 240,0
7 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 208,0
  gt_GT gt_GT_alleles
2     1             T
3     1             T
4     1     CTTTTTTTT
5     1        CCGCGC
6     1             T
7     1             A

f.

variants[1:4,1] #returns first through fourth rows of the first column

[1] "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863"

g.

variants[1:10,c("REF","ALT")] #returns the first through 10th rows of the REF and ALT columns

                                REF
1                                 T
2                                 G
3                                 G
4                          CTTTTTTT
5                              CCGC
6                                 C
7                                 C
8                                 G
9  ACAGCCAGCCAGCCAGCCAGCCAGCCAGCCAG
10                               AT
                                                        ALT
1                                                         G
2                                                         T
3                                                         T
4                                                 CTTTTTTTT
5                                                    CCGCGC
6                                                         T
7                                                         A
8                                                         A
9  ACAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAGCCAG
10                                                      ATT

h.

variants[,c("sample_id")] #returns the entire sample_id column (only the first six values are shown here)

[1] "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863"
[6] "SRR2584863"

i.

head(variants) #returns the first 6 rows

   sample_id      CHROM    POS ID      REF       ALT QUAL FILTER INDEL IDV IMF
1 SRR2584863 CP000819.1   9972 NA        T         G   91     NA FALSE  NA  NA
2 SRR2584863 CP000819.1 263235 NA        G         T   85     NA FALSE  NA  NA
3 SRR2584863 CP000819.1 281923 NA        G         T  217     NA FALSE  NA  NA
4 SRR2584863 CP000819.1 433359 NA CTTTTTTT CTTTTTTTT   64     NA  TRUE  12 1.0
5 SRR2584863 CP000819.1 473901 NA     CCGC    CCGCGC  228     NA  TRUE   9 0.9
6 SRR2584863 CP000819.1 648692 NA        C         T  210     NA FALSE  NA  NA
  DP       VDB RPB MQB BQB     MQSB       SGB     MQ0F ICB HOB AC AN     DP4 MQ
1  4 0.0257451  NA  NA  NA       NA -0.556411 0.000000  NA  NA  1  1 0,0,0,4 60
2  6 0.0961330   1   1   1       NA -0.590765 0.166667  NA  NA  1  1 0,1,0,5 33
3 10 0.7740830  NA  NA  NA 0.974597 -0.662043 0.000000  NA  NA  1  1 0,0,4,5 60
4 12 0.4777040  NA  NA  NA 1.000000 -0.676189 0.000000  NA  NA  1  1 0,1,3,8 60
5 10 0.6595050  NA  NA  NA 0.916482 -0.662043 0.000000  NA  NA  1  1 1,0,2,7 60
6 10 0.2680140  NA  NA  NA 0.916482 -0.670168 0.000000  NA  NA  1  1 0,0,7,3 60
                                                               Indiv gt_PL
1 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 121,0
2 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 112,0
3 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 247,0
4 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam  91,0
5 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 255,0
6 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 240,0
  gt_GT gt_GT_alleles
1     1             G
2     1             T
3     1             T
4     1     CTTTTTTTT
5     1        CCGCGC
6     1             T

j.

tail(variants) #returns the last 6 rows

     sample_id      CHROM     POS ID REF ALT QUAL FILTER INDEL IDV IMF DP
796 SRR2589044 CP000819.1 3444175 NA   G   T  184     NA FALSE  NA  NA  9
797 SRR2589044 CP000819.1 3481820 NA   A   G  225     NA FALSE  NA  NA 12
798 SRR2589044 CP000819.1 3893550 NA  AG AGG  101     NA  TRUE   4   1  4
799 SRR2589044 CP000819.1 3901455 NA   A  AC   70     NA  TRUE   3   1  3
800 SRR2589044 CP000819.1 4100183 NA   A   G  177     NA FALSE  NA  NA  8
801 SRR2589044 CP000819.1 4431393 NA TGG   T  225     NA  TRUE  10   1 10
          VDB RPB MQB BQB     MQSB       SGB MQ0F ICB HOB AC AN     DP4 MQ
796 0.4714620  NA  NA  NA 0.992367 -0.651104    0  NA  NA  1  1 0,0,4,4 60
797 0.8707240  NA  NA  NA 1.000000 -0.680642    0  NA  NA  1  1 0,0,4,8 60
798 0.9182970  NA  NA  NA 1.000000 -0.556411    0  NA  NA  1  1 0,0,3,1 52
799 0.0221621  NA  NA  NA       NA -0.511536    0  NA  NA  1  1 0,0,3,0 60
800 0.9272700  NA  NA  NA 0.900802 -0.651104    0  NA  NA  1  1 0,0,3,5 60
801 0.7488140  NA  NA  NA 1.007750 -0.670168    0  NA  NA  1  1 0,0,4,6 60
                                                                 Indiv gt_PL
796 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 214,0
797 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 255,0
798 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 131,0
799 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 100,0
800 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 207,0
801 /home/dcuser/dc_workshop/results/bam/SRR2589044.aligned.sorted.bam 255,0
    gt_GT gt_GT_alleles
796     1             T
797     1             G
798     1           AGG
799     1            AC
800     1             G
801     1             T

k.

variants$sample_id #returns the sample_id column as a vector (only the first six values are shown here)

[1] "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863" "SRR2584863"
[6] "SRR2584863"

l.

variants[variants$REF == "A",] #returns all columns for the rows in which the REF column has the value "A" (only the first six matching rows are shown here)

    sample_id      CHROM     POS ID REF ALT QUAL FILTER INDEL IDV IMF DP
11 SRR2584863 CP000819.1 2407766 NA   A   C  104     NA FALSE  NA  NA  9
12 SRR2584863 CP000819.1 2446984 NA   A   C  225     NA FALSE  NA  NA 20
14 SRR2584863 CP000819.1 2665639 NA   A   T  225     NA FALSE  NA  NA 19
16 SRR2584863 CP000819.1 3339313 NA   A   C  211     NA FALSE  NA  NA 10
18 SRR2584863 CP000819.1 3481820 NA   A   G  200     NA FALSE  NA  NA  9
19 SRR2584863 CP000819.1 3488669 NA   A   C  225     NA FALSE  NA  NA 13
         VDB      RPB      MQB      BQB     MQSB       SGB     MQ0F ICB HOB AC
11 0.0230738 0.900802 0.150134 0.750668 0.500000 -0.590765 0.333333  NA  NA  1
12 0.0714027       NA       NA       NA 1.000000 -0.689466 0.000000  NA  NA  1
14 0.9960390       NA       NA       NA 1.000000 -0.690438 0.000000  NA  NA  1
16 0.4059360       NA       NA       NA 1.007750 -0.670168 0.000000  NA  NA  1
18 0.1070810       NA       NA       NA 0.974597 -0.662043 0.000000  NA  NA  1
19 0.0162706       NA       NA       NA 1.000000 -0.680642 0.000000  NA  NA  1
   AN      DP4 MQ
11  1  3,0,3,2 25
12  1 0,0,10,6 60
14  1 0,0,12,5 60
16  1  0,0,4,6 60
18  1  0,0,4,5 60
19  1  0,0,8,4 60
                                                                Indiv gt_PL
11 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 131,0
12 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 255,0
14 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 255,0
16 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 241,0
18 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 230,0
19 /home/dcuser/dc_workshop/results/bam/SRR2584863.aligned.sorted.bam 255,0
   gt_GT gt_GT_alleles
11     1             C
12     1             C
14     1             T
16     1             C
18     1             G
19     1             C

The subsetting notation is very similar to what we learned for vectors. The key differences include:

- We typically provide two values separated by a comma: data.frame[row, column]

- In cases where you are taking a continuous range of numbers, use a colon between the numbers (start:stop, inclusive)

- For a non-continuous set of numbers, pass a vector using c()

- Index using the name of one or more columns by passing them as a vector using c()
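The rules above can be tried on a small data frame that is easy to check by eye. This is only a sketch: toy_variants and its contents are made up for illustration and are not the lesson's variants table.

```r
# Hypothetical toy data frame (not the lesson's variants table)
toy_variants <- data.frame(
  sample_id = c("S1", "S1", "S2", "S2", "S3"),
  POS       = c(101, 250, 101, 399, 512),
  REF       = c("A", "G", "A", "T", "C"),
  ALT       = c("G", "T", "C", "G", "T"),
  stringsAsFactors = FALSE
)

# Two values separated by a comma: [row, column]
toy_variants[2, 3]                       # row 2, column 3

# A colon selects a continuous, inclusive range of rows
toy_variants[1:3, ]                      # rows 1, 2 and 3, all columns

# c() selects a non-continuous set of rows
toy_variants[c(1, 4), ]                  # rows 1 and 4 only

# c() with column names selects columns by name
toy_variants[c(1, 4), c("REF", "ALT")]

# A negative index drops rows instead of keeping them
toy_variants[-1, ]                       # everything except row 1
```

Leaving the row or column position empty, as in toy_variants[1:3, ], means "keep everything" along that dimension.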

Finally, in all of the subsetting exercises above, we printed values to the screen. You can also create a new data frame object by assigning the result of a subset to a new object name:

# create a new data frame containing only observations from SRR2584863

SRR2584863_variants <- variants[variants$sample_id == "SRR2584863",]

# check the dimension of the data frame

dim(SRR2584863_variants)

[1] 25 29

# get a summary of the data frame

summary(SRR2584863_variants)

  sample_id            CHROM                POS               ID
 Length:25          Length:25          Min.   :   9972   Mode:logical
 Class :character   Class :character   1st Qu.:1331794   NA's:25
 Mode  :character   Mode  :character   Median :2618472
                                       Mean   :2464989
                                       3rd Qu.:3488669
                                       Max.   :4616538

     REF                ALT                 QUAL          FILTER
 Length:25          Length:25          Min.   : 31.89   Mode:logical
 Class :character   Class :character   1st Qu.:104.00   NA's:25
 Mode  :character   Mode  :character   Median :211.00
                                       Mean   :172.97
                                       3rd Qu.:225.00
                                       Max.   :228.00

   INDEL              IDV             IMF               DP
 Mode :logical   Min.   : 2.00   Min.   :0.6667   Min.   : 2.0
 FALSE:19        1st Qu.: 3.25   1st Qu.:0.9250   1st Qu.: 9.0
 TRUE :6         Median : 8.00   Median :1.0000   Median :10.0
                 Mean   : 7.00   Mean   :0.9278   Mean   :10.4
                 3rd Qu.: 9.75   3rd Qu.:1.0000   3rd Qu.:12.0
                 Max.   :12.00   Max.   :1.0000   Max.   :20.0
                 NA's   :19      NA's   :19

      VDB               RPB              MQB               BQB
 Min.   :0.01627   Min.   :0.9008   Min.   :0.04979   Min.   :0.7507
 1st Qu.:0.07140   1st Qu.:0.9275   1st Qu.:0.09996   1st Qu.:0.7627
 Median :0.37674   Median :0.9542   Median :0.15013   Median :0.7748
 Mean   :0.40429   Mean   :0.9517   Mean   :0.39997   Mean   :0.8418
 3rd Qu.:0.65951   3rd Qu.:0.9771   3rd Qu.:0.57507   3rd Qu.:0.8874
 Max.   :0.99604   Max.   :1.0000   Max.   :1.00000   Max.   :1.0000
                   NA's   :22       NA's   :22        NA's   :22

      MQSB             SGB               MQ0F           ICB
 Min.   :0.5000   Min.   :-0.6904   Min.   :0.00000   Mode:logical
 1st Qu.:0.9599   1st Qu.:-0.6762   1st Qu.:0.00000   NA's:25
 Median :0.9962   Median :-0.6620   Median :0.00000
 Mean   :0.9442   Mean   :-0.6341   Mean   :0.04667
 3rd Qu.:1.0000   3rd Qu.:-0.6168   3rd Qu.:0.00000
 Max.   :1.0128   Max.   :-0.4536   Max.   :0.66667
 NA's   :3

   HOB                AC          AN        DP4                  MQ
 Mode:logical   Min.   :1   Min.   :1   Length:25          Min.   :10.00
 NA's:25        1st Qu.:1   1st Qu.:1   Class :character   1st Qu.:60.00
                Median :1   Median :1   Mode  :character   Median :60.00
                Mean   :1   Mean   :1                      Mean   :55.52
                3rd Qu.:1   3rd Qu.:1                      3rd Qu.:60.00
                Max.   :1   Max.   :1                      Max.   :60.00

    Indiv              gt_PL               gt_GT   gt_GT_alleles
 Length:25          Length:25          Min.   :1   Length:25
 Class :character   Class :character   1st Qu.:1   Class :character
 Mode  :character   Mode  :character   Median :1   Mode  :character
                                       Mean   :1
                                       3rd Qu.:1
                                       Max.   :1
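To see why a comparison inside the brackets selects rows, it can help to split the step in two. The sketch below uses a made-up data frame; toy_variants, is_s1, and s1_variants are hypothetical names used only for illustration.

```r
# Hypothetical toy data frame (not the lesson's variants table)
toy_variants <- data.frame(
  sample_id = c("S1", "S1", "S2", "S2", "S3"),
  REF       = c("A", "G", "A", "T", "C"),
  stringsAsFactors = FALSE
)

# The comparison is evaluated first and gives one TRUE/FALSE per row
is_s1 <- toy_variants$sample_id == "S1"
is_s1

# Used in the row position, the logical vector keeps only the TRUE rows
s1_variants <- toy_variants[is_s1, ]

# The new object is still a data frame: fewer rows, same columns
dim(s1_variants)
class(s1_variants)
```

Writing toy_variants[toy_variants$sample_id == "S1", ] does the same thing in one line; the intermediate is_s1 vector is shown only to make the mechanism visible.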

Coercing values in data frames

Tip: coercion isn’t limited to data frames

While we are going to address coercion in the context of data frames, most of these methods apply to other data structures, such as vectors.

Sometimes, it is possible that R will misinterpret the type of data represented in a data frame, or store that data in a mode which prevents you from operating on the data the way you wish. For example, a long list of gene names isn’t usually thought of as a categorical variable, the way that your experimental condition (e.g. control, treatment) might be. More importantly, some R packages you use to analyze your data may expect characters as input, not factors. At other times (such as plotting or some statistical analyses) a factor may be more appropriate. Ultimately, you should know how to change the mode of an object.

First, it's very important to recognize that coercion happens in R all the time. This can be a good thing when R gets it right, or a bad thing when the result is not what you expect. Consider:

snp_chromosomes <- c('3', '11', 'X', '6')

typeof(snp_chromosomes)

[1] "character"

Although there are several numbers in our vector, they are all in quotes, so we have explicitly told R to consider them as characters. However, even if we removed the quotes from the numbers, R would coerce everything into a character:

snp_chromosomes_2 <- c(3, 11, 'X', 6)

typeof(snp_chromosomes_2)

[1] "character"

snp_chromosomes_2[1]

[1] "3"
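The behaviour above follows a general pattern: when c() combines values of different types, R converts them all to the most flexible type present. For the types used in this lesson the order is logical, then integer, then double, then character. A short sketch with made-up values (the name mixed is hypothetical):

```r
# Mixing types in c() silently coerces to the most flexible type present
typeof(c(TRUE, FALSE))        # "logical"
typeof(c(TRUE, 2L))           # "integer"   (TRUE becomes 1L)
typeof(c(2L, 3.5))            # "double"
typeof(c(3.5, "X"))           # "character" (3.5 becomes "3.5")

# A single character value is enough to turn the whole vector into text
mixed <- c(TRUE, 2L, 3.5, "X")
typeof(mixed)
mixed
```

This is why one stray text entry in an otherwise numeric column is enough to make the whole column character.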

We can use the as. functions to explicitly coerce values from one form into another. Consider the following vector of characters, which all happen to be valid numbers:

snp_positions_2 <- c("8762685", "66560624", "67545785", "154039662")

typeof(snp_positions_2)

[1] "character"

snp_positions_2[1]

[1] "8762685"

Now we can coerce snp_positions_2 into a numeric type using as.numeric():

snp_positions_2 <- as.numeric(snp_positions_2)

typeof(snp_positions_2)

[1] "double"

snp_positions_2[1]

[1] 8762685
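as.numeric() is one member of a family of as. functions. As a brief sketch with made-up values (positions_chr, positions_int, and conditions are hypothetical names), a few of the others behave like this:

```r
# Hypothetical values, used only to illustrate the as.* family
positions_chr <- c("8762685", "66560624")

# as.integer() gives whole-number storage instead of double
positions_int <- as.integer(positions_chr)
typeof(positions_int)                  # "integer"

# as.character() goes back the other way
typeof(as.character(positions_int))    # "character"

# as.factor() turns labels into a categorical variable ...
conditions <- as.factor(c("control", "treatment", "control"))
levels(conditions)                     # "control" "treatment"

# ... and as.character() recovers the original labels
as.character(conditions)
```

Converting between character and factor in this way is what you would reach for when a package expects one and your data frame column holds the other.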

Sometimes coercion is straightforward, but what would happen if we tried using as.numeric() on snp_chromosomes_2?

snp_chromosomes_2 <- as.numeric(snp_chromosomes_2)